A WIRED investigation reveals that despite GitHub's efforts to combat the spread of deepfake porn, numerous repositories containing code for creating nonconsensual intimate imagery continue to evade detection. The investigation highlights the challenges of moderating open-source software and the persistence of abuse even after platforms take action.

In late November, a deepfake porn maker claiming to be based in the US uploaded a sexually explicit video to the world’s largest site for pornographic deepfakes, featuring TikTok influencer Charli D’Amelio’s face superimposed onto a porn performer’s body. Despite the influencer presumably playing no role in the video’s production, it was viewed more than 8,200 times and captured the attention of other deepfake fans.

“So nice! What program did you use for creating the deepfake??” one user going by the name balascool commented. “I love charli.” D’Amelio’s agent did not reply to a request for comment.

The video’s creator, “DeepWorld23,” has claimed in the comments that the program was a deepfake model hosted on developer platform GitHub. This program was “starred” by 46,300 other users before being disabled in August 2024 after the platform introduced rules banning projects for synthetically creating nonconsensual sexual images, aka deepfake porn. It became available again in November 2024 in an archived format, where users can still access the code.

GitHub’s crackdown is incomplete, as the code, along with others taken down by the developer site, also persists in other repositories on the platform. A WIRED investigation has found more than a dozen GitHub projects linked to deepfake “porn” videos evading detection, extending access to code used for intimate image abuse and highlighting blind spots in the platform’s moderation efforts. WIRED is not naming the projects or websites to avoid amplifying the abuse.

“It’s not easy to always remove something the moment it comes online,” says Henry Ajder, an AI adviser to tech companies like Meta and Adobe on the challenge of moderating open source material online. “At the same time, there were red flags that were pretty clear.”

Implemented in June 2024, GitHub’s policy bans projects that are “designed for, encourage, promote, support, or suggest in any way the use of synthetic or manipulated media for the creation of nonconsensual intimate imagery or any content that would constitute misinformation or disinformation.” GitHub has disabled at least three repositories identified by WIRED in December 2024 and is clearly taking action on abusive code.
But others have popped up elsewhere on the platform, including some with near-identical branding or clear descriptors as “NSFW,” “unlocked” versions, or “bypasses.” GitHub did not respond to a request for comment.

One project identified by WIRED in December 2024 had branding almost identical to a major project, self-described as the “leading software for creating deepfakes,” which GitHub disabled for several months last year for violating its terms of service. GitHub has also since disabled this additional version. “It wasn’t hiding,” says Ajder. “It wasn’t particularly subtle.”

However, an archived version of the major repository is still available, and at least six other repositories based on the model were present on GitHub as of January 10, including another branded almost identically. All of the GitHub projects found by WIRED were at least partially built on code linked to videos on the deepfake porn streaming site.

The repositories are part of a web of open source software across the internet that can be used to make deepfake porn but, by its open nature, cannot be gate-kept. GitHub repos can be copied, a process known as a “fork,” and from there tailored freely by developers.

“When we look at intimate image abuse, the vast majority of tools and weaponized use have come from the open source space,” says Ajder. But they often start with well-meaning developers, he says. “Someone creates something they think is interesting or cool and someone with bad intentions recognizes its malicious potential and weaponizes it.”

Some, like the repository disabled in August, have purpose-built communities around them for explicit uses. The model positioned itself as a tool for deepfake porn, claims Ajder, becoming a “funnel” for abuse, which predominantly targets women.
Other videos uploaded to the porn-streaming site by an account crediting AI models downloaded from GitHub featured the faces of popular deepfake targets, celebrities Emma Watson, Taylor Swift, and Anya Taylor-Joy, as well as other less famous but very much real women, superimposed into sexual situations. The creators freely described the tools they used, including two scrubbed by GitHub but whose code survives in other existing repositories.

Perpetrators on the prowl for deepfakes congregate in many places online, including in covert community forums on Discord and in plain sight on Reddit, complicating deepfake prevention attempts. One Redditor offered their services using the archived repository’s software on September 29. “Could someone do my cousin,” another asked.

Torrents of the main repository banned by GitHub in August are also available in other corners of the web, showing how difficult it is to police open-source deepfake software across the board.

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

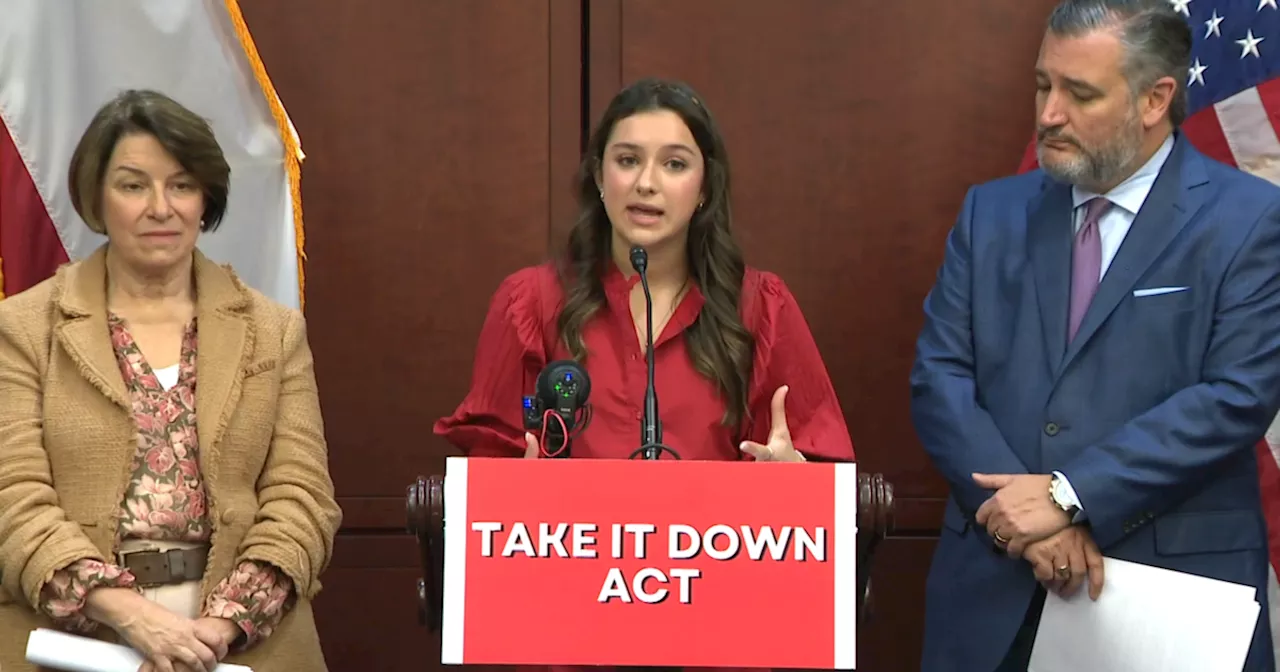

Bipartisan Bill Targets Deepfakes and Revenge PornSenators Cruz and Klobuchar are pushing for swift passage of the Take it Down Act to combat the spread of deepfake content and revenge porn.

Bipartisan Bill Targets Deepfakes and Revenge PornSenators Cruz and Klobuchar are pushing for swift passage of the Take it Down Act to combat the spread of deepfake content and revenge porn.

Read more »

Teen victim of AI-generated 'deepfake pornography' urges Congress to pass 'Take It Down Act'Elliston Berry's life was turned upside down after a photo she posted on Instagram was digitally altered online to be pornographic.

Teen victim of AI-generated 'deepfake pornography' urges Congress to pass 'Take It Down Act'Elliston Berry's life was turned upside down after a photo she posted on Instagram was digitally altered online to be pornographic.

Read more »

The Capture: A Deep Dive into Surveillance and Deepfake TechnologyThe BBC series The Capture, streaming on Peacock, explores the unsettling world of surveillance and deepfake technology. The show follows Detective Rachel Carey as she investigates a case involving a former soldier accused of kidnapping, only to uncover a vast conspiracy surrounding the manipulation of video and audio through 'Corrections'. As the series progresses, the stakes escalate with the use of deepfakes against a British politician, highlighting the potential for political manipulation and geopolitical ramifications.

The Capture: A Deep Dive into Surveillance and Deepfake TechnologyThe BBC series The Capture, streaming on Peacock, explores the unsettling world of surveillance and deepfake technology. The show follows Detective Rachel Carey as she investigates a case involving a former soldier accused of kidnapping, only to uncover a vast conspiracy surrounding the manipulation of video and audio through 'Corrections'. As the series progresses, the stakes escalate with the use of deepfakes against a British politician, highlighting the potential for political manipulation and geopolitical ramifications.

Read more »

Man Jailed for Creating Deepfake Porn of Ex-Wife and Other WomenA UK man was sentenced to prison for creating and distributing deepfake pornographic images of his ex-wife and other women. The victims, including two teachers, have been deeply impacted by the ordeal, suffering humiliation, fear, and damage to their professional reputations.

Man Jailed for Creating Deepfake Porn of Ex-Wife and Other WomenA UK man was sentenced to prison for creating and distributing deepfake pornographic images of his ex-wife and other women. The victims, including two teachers, have been deeply impacted by the ordeal, suffering humiliation, fear, and damage to their professional reputations.

Read more »

Deepfake Video Shows Queen Camilla Claiming to Be TransgenderA viral video circulating online falsely portrays Queen Camilla, wife of King Charles III, stating she is transgender. The clip is a manipulated version of a message she delivered on UK Armed Forces Day, where she makes no mention of gender identity.

Deepfake Video Shows Queen Camilla Claiming to Be TransgenderA viral video circulating online falsely portrays Queen Camilla, wife of King Charles III, stating she is transgender. The clip is a manipulated version of a message she delivered on UK Armed Forces Day, where she makes no mention of gender identity.

Read more »

Alien: Romulus Director Addresses Controversial Deepfake ScenesFede Álvarez's Alien: Romulus sparked debate for its use of a deepfake recreation of deceased actor Ian Holm. The director acknowledges the criticism and made adjustments to the scenes featuring Rook, the android portrayed by Holm's likeness, for the Blu-ray release. However, the changes are primarily limited to obscuring the effects work rather than a complete overhaul.

Alien: Romulus Director Addresses Controversial Deepfake ScenesFede Álvarez's Alien: Romulus sparked debate for its use of a deepfake recreation of deceased actor Ian Holm. The director acknowledges the criticism and made adjustments to the scenes featuring Rook, the android portrayed by Holm's likeness, for the Blu-ray release. However, the changes are primarily limited to obscuring the effects work rather than a complete overhaul.

Read more »