This article explores the unsettling feeling many people experience when encountering large language models (LLMs) that seem too human-like in their responses. It examines the psychological reasons behind this unease, showing how our own cognitive wiring and the uncanny valley effect make LLMs feel both fascinating and disturbing.

Both humans and LLMs are pattern-matchers, and that shared trait blurs the boundary between real and artificial thought.

Are LLMs creepy? Many people, including me, have paused at that odd moment when an AI-generated response feels too human, too intuitive. The experience is both fascinating and disconcerting. How can a machine seem so perceptive and yet be entirely empty of thought? The answer isn’t simple, because the creep factor isn’t in the technology itself. It’s in us.

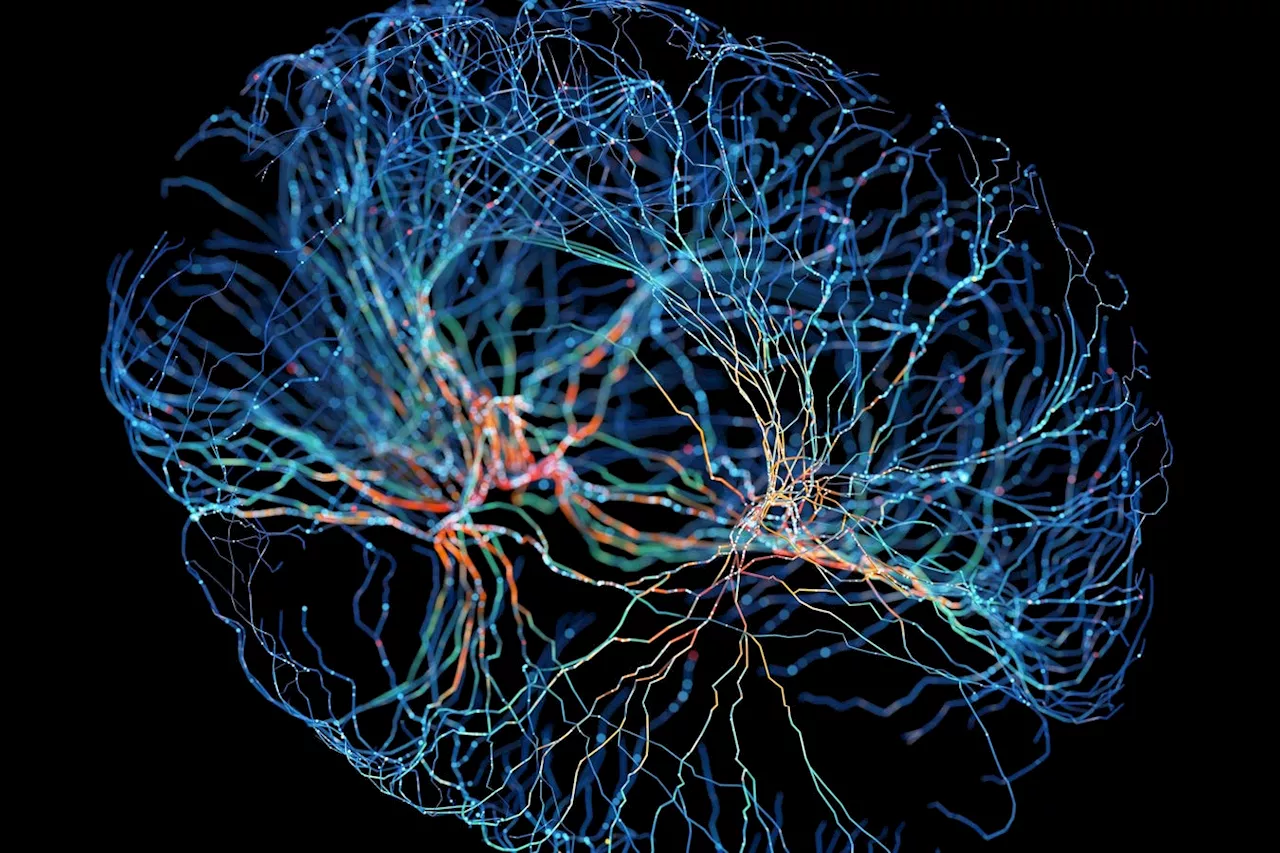

In robotics, the uncanny valley describes how the closer an android looks to being human, the more unsettling it becomes, until it reaches near-perfect realism. But what about an uncanny valley of thought? LLMs exist in this psychological gray zone. They aren’t human, yet they produce text that sounds deeply intelligent. They don’t think, yet they generate ideas that feel as though they were formed with intent. Their words have rhythm and coherence, yet they lack the lived experience to anchor them in reality. This in-between state (more than a simple tool, less than a conscious mind) is what makes them feel strange.

Part of what makes AI feel eerie is our own cognitive wiring. Humans are meaning-makers. We see faces in clouds, hear voices in white noise, and assign meaning to inanimate objects. When an LLM responds in a way that resonates, it’s hard not to attribute some level of agency to it.

Interestingly, this tendency aligns with how LLMs function. At their core, LLMs are also pattern recognizers. We derive meaning from incomplete information, turning scattered dots into constellations or random sounds into familiar voices. In a similar way, LLMs generate responses by predicting the next statistically likely word based on patterns in vast datasets. This similarity between human and machine cognition is unsettling precisely because it evokes a sense of sentience where none truly exists.

When an LLM mirrors our emotions, it feels like empathy. But the reality is both simpler and more complex. LLMs don’t intend anything in the way humans do. They predict, based on vast amounts of training data, what the most likely next word should be. Yet beyond mere prediction, they engage in forms of structured inference: identifying relationships, synthesizing patterns, and making connections that often feel remarkably insightful. From these capabilities something like intelligence emerges, creating an illusion of thought and intent that is difficult to dismiss outright.
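The idea of "predicting the next statistically likely word" can be made concrete with a deliberately tiny sketch. What follows is a toy bigram counter in Python, assumed here purely for illustration. It is not how production LLMs are built: real models use neural networks trained on subword tokens with far longer contexts. The corpus and the function names `train_bigrams` and `predict_next` are hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict

# Toy illustration only (an assumption, not how production LLMs work):
# real models use neural networks over subword tokens and long contexts,
# not simple word-pair counts.


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return dict(follows)


def predict_next(follows: dict[str, Counter], word: str) -> tuple[str, float] | None:
    """Return the most frequent follower of `word` and its relative frequency."""
    counts = follows.get(word.lower())
    if not counts:
        return None  # never seen this word, so there is no pattern to match
    best, n = counts.most_common(1)[0]
    return best, n / sum(counts.values())


# Hypothetical miniature "training data".
corpus = "we see faces in clouds and we see patterns in noise and we hear voices in noise"
model = train_bigrams(corpus)

word, prob = predict_next(model, "we")
print(f"after 'we': {word} ({prob:.2f})")  # after 'we': see (0.67)
```

Even this crude counter produces plausible continuations without understanding anything. The gap between it and a modern LLM lies in scale and in learned representations, which let a model generalize beyond exact word pairs it has already seen.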
They Know Too Much… and Not Enough

They can summarize dense academic papers, draft legal documents, and generate poetry in the style of Yeats. They appear to have deep knowledge because they have absorbed massive datasets of human language. But the moment they encounter a question that requires true comprehension, things can unravel. Ask a person about the best place to bury a loved one, and they will hear the emotional significance of the question: the grief that isn’t buried. But an LLM? It might confidently cite burial regulations or name a legal jurisdiction, missing the point entirely. This paradox makes them eerie. They are brilliant at synthesis but fail at understanding. They have memorized the words of the world, but not the world’s wisdom.

Perhaps the most unsettling thing about LLMs isn’t what they say; it’s what they reveal about us. LLMs are trained on human language. They don’t create, they remix. They don’t think, they mirror. When they say something profound, it’s because our collective intelligence is profound. When they generate something dark, it’s because we’ve fed them darkness. They are a reflection of human thought, unfiltered and exposed, compressed into a form we don’t quite understand. And when we ask them questions about life, meaning, or consciousness, we aren’t really asking them. We are questioning that reflection of ourselves.

Our unease isn’t limited to text-based AI. The introduction of hyper-realistic voice models and deepfake images has amplified this phenomenon. AI-generated voices can now replicate human intonation, pauses, and even emotional inflections with unsettling accuracy. The boundary between real and artificial speech is blurring, making it increasingly difficult to distinguish between a human and a synthetic voice over the phone or in media. Likewise, deepfake technology has given rise to hyper-realistic digital faces that can speak, emote, and react in ways that mimic human expression.
These synthetic figures trigger the same discomfort as LLMs, especially when they exist just on the edge of believability. When combined with LLM-generated dialogue, these technologies create an uncanny simulacrum of human interaction that raises fundamental questions about trust, authenticity, and the very nature of our social reality.

It’s easy to dismiss AI as just another tool, but that misses the point. AI isn’t just changing how we think; it’s changing our relationship to thinking itself. It forces us to ask uncomfortable questions: What is intelligence? What is intent? What is understanding? And most unsettling of all, if LLMs can generate thoughts without a thinker, where does that leave us?


Similar News: You can also read news stories similar to this one that we have collected from other news sources.

LLMs: Revolutionizing Patient Education and Bridging Health Disparities

This article explores how Large Language Models (LLMs) are poised to transform patient education by providing personalized, accessible, and culturally sensitive learning experiences. It discusses the limitations of traditional patient education materials and how LLMs can address these challenges, fostering patient engagement, building trust, and ultimately improving health outcomes.

Here’s How Big LLMs Teach Smaller AI Models Via Leveraging Knowledge Distillation

AI-driven knowledge distillation is gaining attention. LLMs are teaching SLMs. Expect this trend to increase. Here's the insider scoop.

ChatGPT-4 Shows Promise but LLMs Still Pose Risks in Rheumatology

A new study compares the performance of three large language models (LLMs) - ChatGPT-4, Claude 3 Opus, and Gemini Advanced - in answering rheumatology questions. While ChatGPT-4 demonstrates higher accuracy and quality, over 70% of incorrect answers from all three models had the potential to cause harm.

Understanding the Complexity of AI: Beyond LLMs

This article explores the current state of AI development, focusing on the limitations of current large language models (LLMs) and the need for a more sophisticated approach inspired by the human mind. Drawing on insights from renowned computer scientist Yann LeCun, it highlights the importance of persistent memory, context understanding, and a 'society of mind' architecture for truly intelligent AI.

AI Agents Poised to Replace LLMs as the Next Big Thing

Leading AI labs predict a shift from large language models (LLMs) to AI agents by 2025 due to advancements in the field and the emergence of open-source alternatives. AI agents, capable of autonomously performing tasks, are expected to become the dominant force in AI, surpassing the utility of LLMs.

AI Agents Set to Replace LLMs: Open-Source and Next-Gen Tech Drive the Shift

Experts predict that large language models (LLMs) like OpenAI's will become commoditized by 2025 due to advancements in AI agents and open-source technology. DeepSeek's R1 model, which surpasses OpenAI's o1 in cost and performance, exemplifies this trend. The shift towards agentic AI systems, capable of performing actions autonomously, is expected to transform how we interact with the web.
