Apple and NVIDIA shared details of a collaboration to improve the performance of LLMs with a new text generation technique for AI.

Cupertino writes: 'Accelerating LLM inference is an important ML research problem, as auto-regressive token generation is computationally expensive and relatively slow, and improving inference efficiency can reduce latency for users.'

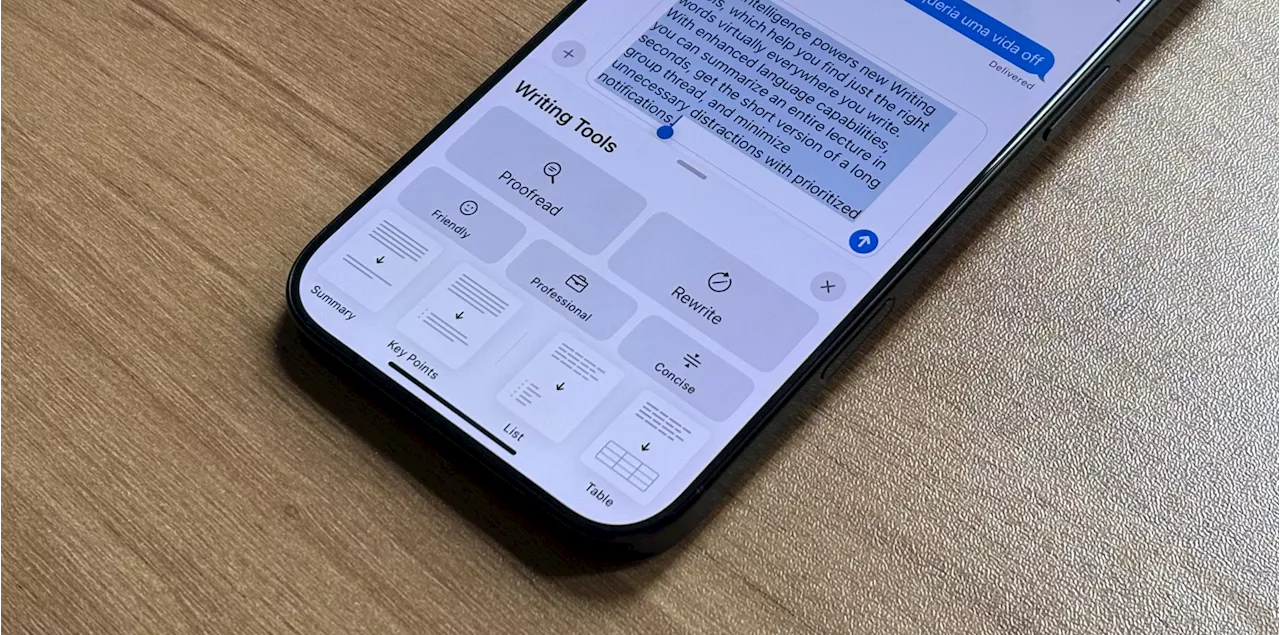

Apple continues: 'In addition to ongoing efforts to accelerate inference on Apple silicon, we have recently made significant progress in accelerating LLM inference for the NVIDIA GPUs widely used for production applications across the industry.'

Earlier this year, Apple published and open-sourced Recurrent Drafter (ReDrafter), a novel approach to speculative decoding that 'achieves state of the art performance.' According to the company, ReDrafter uses an RNN draft model and combines beam search with dynamic tree attention, speeding up LLM token generation to as many as 3.5 tokens per generation step for open source models and surpassing the performance of prior speculative decoding techniques.

'In benchmarking a tens-of-billions parameter production model on NVIDIA GPUs, using the NVIDIA TensorRT-LLM inference acceleration framework with ReDrafter, we have seen 2.7x speed-up in generated tokens per second for greedy decoding,' Apple writes.

With that, this technology could significantly reduce the latency users experience, while also using fewer GPUs and consuming less power. This is especially useful as Apple keeps improving its Apple Intelligence platform. By offering faster and more accurate results, users will have a better experience when using Apple's AI tools.

The company closes by saying ReDrafter can improve the experience with NVIDIA's GPUs: 'LLMs are increasingly being used to power production applications, and improving inference efficiency can both impact computational costs and reduce latency for users. With ReDrafter's novel approach to speculative decoding integrated into the NVIDIA TensorRT-LLM framework, developers can now benefit from faster token generation on NVIDIA GPUs for their production LLM applications.'

If you're a developer and want to use the new ReDrafter tool, you can find detailed information on both Apple's website and NVIDIA's developer blog.
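For readers unfamiliar with speculative decoding, the general idea can be sketched in a few lines of Python. This is a toy illustration of plain greedy speculative decoding, not ReDrafter itself: it has no RNN drafter, beam search, or tree attention, and it does not use TensorRT-LLM. The two "models" (`target_next`, `draft_next`) and the draft length `k` are hypothetical stand-ins invented for this example. A cheap draft model proposes several tokens, the expensive target model checks them, and the longest matching prefix is kept, so the output is identical to ordinary greedy decoding while needing fewer target steps.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]


def target_next(context: List[int]) -> int:
    """Hypothetical stand-in for the large model: deterministic next token."""
    return (sum(context) * 31 + len(context)) % 50


def draft_next(context: List[int]) -> int:
    """Hypothetical cheap drafter: agrees with the target most of the time,
    but is deliberately wrong whenever the context length is a multiple of 4."""
    guess = target_next(context)
    return guess if len(context) % 4 else (guess + 1) % 50


def greedy_decode(model: NextToken, prompt: List[int], n: int) -> List[int]:
    """Baseline: one model call per generated token."""
    out = list(prompt)
    for _ in range(n):
        out.append(model(out))
    return out[len(prompt):]


def speculative_decode(
    target: NextToken, draft: NextToken, prompt: List[int], n: int, k: int = 4
) -> Tuple[List[int], int]:
    """Greedy speculative decoding. Returns (tokens, number of verify steps)."""
    out = list(prompt)
    steps = 0
    while len(out) - len(prompt) < n:
        steps += 1
        # 1) The draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        ctx = list(out)
        for _ in range(k):
            tok = draft(ctx)
            proposal.append(tok)
            ctx.append(tok)
        # 2) The target verifies the proposal. A real system scores all k
        #    positions in one batched forward pass; the toy calls it per token.
        for tok in proposal:
            expected = target(out)
            out.append(expected)  # always keep the target's own choice
            if expected != tok:
                break  # first mismatch: discard the rest of the draft
        else:
            # Every draft token matched, so the target adds one bonus token.
            out.append(target(out))
    return out[len(prompt):len(prompt) + n], steps


if __name__ == "__main__":
    prompt = [1, 2, 3]
    tokens, steps = speculative_decode(target_next, draft_next, prompt, 20)
    print("same as greedy:", tokens == greedy_decode(target_next, prompt, 20))
    print("verify steps:", steps, "for", len(tokens), "tokens")
```

Because every kept token is the one the target model would have chosen, quality is unchanged; the gain comes only from producing several tokens per target step, which is the quantity behind the "tokens per generation step" figure quoted above.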
