Explore a novel approach to improve multimodal meme sentiment classification by supplementing training with unimodal sentiment data.

Authors: Muzhaffar Hazman, University of Galway, Ireland; Susan McKeever, Technological University Dublin, Ireland; Josephine Griffith, University of Galway, Ireland.

Abstract

Internet Memes remain a challenging form of user-generated content for automated sentiment classification. The scarcity of labelled memes is a barrier to developing sentiment classifiers for multimodal memes. To address this shortage, we propose to supplement the training of a multimodal meme classifier with unimodal data. In this work, we present a novel variant of supervised intermediate training that uses relatively abundant sentiment-labelled unimodal data. Our results show a statistically significant performance improvement from the incorporation of unimodal text data. Furthermore, we show that the training set of labelled memes can be reduced by 40% without reducing the performance of the downstream model.

1 Introduction

As Internet Memes become increasingly popular and commonplace across digital communities worldwide, research interest in extending natural language classification tasks, such as sentiment classification, hate speech detection, and sarcasm detection, to these multimodal units of expression has grown. However, state-of-the-art multimodal meme sentiment classifiers significantly underperform contemporary text sentiment classifiers and image sentiment classifiers. Without accurate and reliable methods to identify the sentiment of multimodal memes, social media sentiment analysis methods must either ignore or inaccurately infer opinions expressed via memes. As memes continue to be a mainstay of online discourse, our ability to infer the meaning they convey becomes increasingly pertinent.

Achieving sentiment classification performance on memes comparable to that on unimodal content remains a challenge. Beyond handling their multimodal nature, meme classifiers must discern sentiment from culturally specific inputs that comprise brief texts, cultural references, and visual symbolism.
Although various approaches have been used to extract information from each modality, recent works have highlighted that meme classifiers must also recognise the various forms of interaction between these two modalities. Current approaches to training meme classifiers depend on datasets of labelled memes containing enough samples to train classifiers to extract relevant features from each modality as well as relevant cross-modal interactions. Relative to the complexity of the task, the current availability of labelled memes still poses a problem, as many current works call for more data. Worse still, memes are hard to label. First, the complexity and culture dependence of memes cause the Subjective Perception Problem, where annotators' varying familiarity with, and emotional reactions to, the contents of a meme lead to different ground-truth labels. Second, memes often contain copyright-protected visual elements taken from other popular media, raising concerns when publishing datasets. This required Kiela et al. to manually reconstruct each meme in their dataset using licensed images, significantly increasing the annotation effort. Furthermore, the visual elements that comprise a given meme often emerge as a sudden trend that rapidly spreads through online communities, quickly introducing into common meme parlance new, semantically rich visual symbols that carried little meaning before. Taken together, these characteristics make the labelling of memes particularly challenging and costly.

In seeking more data-efficient methods to train meme sentiment classifiers, our work attempts to leverage relatively abundant unimodal sentiment-labelled data, i.e. sentiment analysis datasets with image-only and text-only samples. We do so using Phang et al.’s Supplementary Training on Intermediate Labeled-data Tasks (STILT), which addresses the low performance often encountered when finetuning pretrained text encoders on data-scarce Natural Language Understanding (NLU) tasks.
Phang et al.’s STILT approach entails three steps:
1. Load pretrained weights into a classifier model.
2. Finetune the model on a supervised learning task for which data is easily available (the intermediate task).
3. Finetune the model on a data-scarce task (the target task) that is distinct from the intermediate task.

STILT has been shown to improve the performance of various models on a variety of text-only target tasks. Furthermore, Pruksachatkun et al. observed that STILT is particularly effective for NLU target tasks with smaller datasets, e.g. WiC and BoolQ. They also showed, however, that the performance benefits of this approach are inconsistent and depend on choosing an appropriate intermediate task for any given target task. In some cases, intermediate training was even detrimental to target-task performance, which Pruksachatkun et al. attributed to differences in the “syntactic and semantic skills” required by each intermediate and target task pair. Notably, STILT has not yet been tested in a configuration in which the intermediate and target tasks have different input modalities.

Although considering only the text or only the image of a meme in isolation does not convey its entire meaning, we suspect that unimodal sentiment data may help the classifier acquire skills relevant to discerning the sentiment of memes. By proposing a novel variant of STILT that uses unimodal sentiment analysis data as an intermediate task in training a multimodal meme sentiment classifier, we answer the following questions:

RQ1: Does supplementing the training of a multimodal meme classifier with unimodal sentiment data significantly improve its performance?

We separately tested our proposed approach with image-only and text-only 3-class sentiment data (Image-STILT and Text-STILT, respectively, as illustrated in Figure 1). If either proves effective, we additionally answer:

RQ2: With unimodal STILT, to what extent can we reduce the amount of labelled memes whilst preserving the performance of a meme sentiment classifier?

This paper is available on arXiv under a CC 4.0 license.
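The three-step STILT procedure described above, in a text-only-intermediate configuration like Text-STILT, can be sketched in plain Python. This is a toy illustration under stated assumptions, not the authors' implementation: a linear softmax model stands in for the actual meme classifier, hand-made feature vectors stand in for encoder outputs, all-zero weights stand in for a real pretrained checkpoint, and zero-filling the image features of text-only samples (`text_only_as_meme`) is a hypothetical way of feeding unimodal data to a multimodal model. All names (`SoftmaxClassifier`, `finetune`, `stilt`, `subsample`) are illustrative. The `subsample` helper mirrors the RQ2 setting, in which only a fraction of the labelled memes is kept.

```python
import math
import random
from typing import List, Sequence, Tuple

# A sample is (feature vector, label); labels 0/1/2 = negative/neutral/positive (assumed encoding).
Sample = Tuple[List[float], int]
NUM_CLASSES = 3


class SoftmaxClassifier:
    """Toy linear softmax model standing in for a meme sentiment classifier (hypothetical)."""

    def __init__(self, weights: Sequence[Sequence[float]], bias: Sequence[float]) -> None:
        # Copy the inputs so finetuning never mutates the "pretrained" checkpoint.
        self.weights = [list(row) for row in weights]
        self.bias = list(bias)

    def probs(self, x: Sequence[float]) -> List[float]:
        logits = [sum(w * xi for w, xi in zip(row, x)) + b
                  for row, b in zip(self.weights, self.bias)]
        top = max(logits)  # subtract the largest logit for numerical stability
        exps = [math.exp(z - top) for z in logits]
        total = sum(exps)
        return [e / total for e in exps]

    def predict(self, x: Sequence[float]) -> int:
        p = self.probs(x)
        return p.index(max(p))


def finetune(model: SoftmaxClassifier, data: Sequence[Sample],
             epochs: int, lr: float, seed: int = 0) -> SoftmaxClassifier:
    """Plain SGD on cross-entropy loss; updates `model` in place and returns it."""
    rng = random.Random(seed)
    order = list(data)
    for _ in range(epochs):
        rng.shuffle(order)
        for x, y in order:
            p = model.probs(x)
            for k in range(NUM_CLASSES):
                grad = p[k] - (1.0 if k == y else 0.0)  # d(loss)/d(logit_k)
                model.bias[k] -= lr * grad
                for j, xj in enumerate(x):
                    model.weights[k][j] -= lr * grad * xj
    return model


def text_only_as_meme(text_feats: Sequence[float], image_dim: int) -> List[float]:
    """Hypothetical adapter: zero-fill the image half of a text-only sample."""
    return list(text_feats) + [0.0] * image_dim


def subsample(data: Sequence[Sample], fraction: float, seed: int = 0) -> List[Sample]:
    """Keep a random `fraction` of the labelled memes (0.6 keeps 60%, i.e. a 40% reduction)."""
    return random.Random(seed).sample(list(data), round(len(data) * fraction))


def stilt(pretrained_w, pretrained_b, intermediate, target,
          epochs: Tuple[int, int] = (200, 50), lr: float = 0.5) -> SoftmaxClassifier:
    model = SoftmaxClassifier(pretrained_w, pretrained_b)  # step 1: load pretrained weights
    finetune(model, intermediate, epochs[0], lr)           # step 2: data-rich intermediate task
    finetune(model, target, epochs[1], lr)                 # step 3: data-scarce target task
    return model


# Usage with toy features: 2 "text" dimensions followed by 2 "image" dimensions.
TEXT_DIM, IMAGE_DIM = 2, 2
text_data = [([1.0, 0.0], 0), ([0.0, 0.0], 1), ([0.0, 1.0], 2)]
intermediate = [(text_only_as_meme(x, IMAGE_DIM), y) for x, y in text_data]
memes = [([1.0, 0.0, 1.0, 0.0], 0), ([0.0, 0.0, 0.0, 0.0], 1), ([0.0, 1.0, 0.0, 1.0], 2)]
zeros_w = [[0.0] * (TEXT_DIM + IMAGE_DIM) for _ in range(NUM_CLASSES)]
model = stilt(zeros_w, [0.0] * NUM_CLASSES, intermediate, memes)
print([model.predict(x) for x, _ in memes])
```

Because steps 2 and 3 update the same parameters in sequence, whatever the model learns from the abundant text-only data becomes the starting point for the small meme dataset. Under the zero-filling assumption, the image-side weights receive no gradient in step 2 and are learned only in step 3; how a real multimodal architecture should consume unimodal samples is a design decision this sketch does not settle. To mimic the RQ2 setting, `target` could be replaced with `subsample(memes, 0.6)`.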

United States Latest News, United States Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

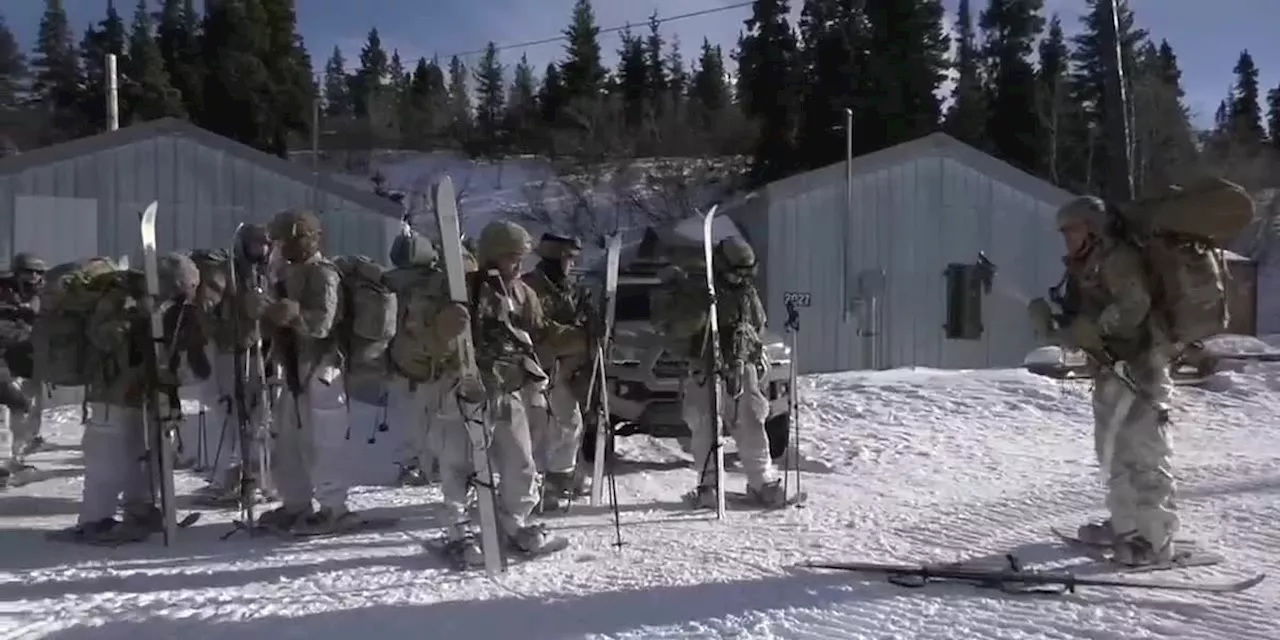

Military Report: Arctic training in the Alaska Range at the Northern Warfare Training CenterThe 11th Airborne is working to make all of their leaders top notch arctic soldiers by sending them to the Northern Warfare training Center.

Military Report: Arctic training in the Alaska Range at the Northern Warfare Training CenterThe 11th Airborne is working to make all of their leaders top notch arctic soldiers by sending them to the Northern Warfare training Center.

Read more »

Reps. Lee, Swalwell celebrate $30m in federal funds for multimodal projectU.S. Reps. Barbara Lee, D-Oakland, and Eric Swalwell, D-Castro Valley, celebrated on Friday after receiving $30 million in federal funding from the U.S. Department of Transportation to install about 10 miles of street improvements along the project corridor, which will span from Lake Merritt to Bayfair.

Reps. Lee, Swalwell celebrate $30m in federal funds for multimodal projectU.S. Reps. Barbara Lee, D-Oakland, and Eric Swalwell, D-Castro Valley, celebrated on Friday after receiving $30 million in federal funding from the U.S. Department of Transportation to install about 10 miles of street improvements along the project corridor, which will span from Lake Merritt to Bayfair.

Read more »

Casey, McCormick to appear alone on Senate ballots in Pennsylvania after courts boot off challengersThe new training program is providing election administration training for election officials across the Commonwealth.

Casey, McCormick to appear alone on Senate ballots in Pennsylvania after courts boot off challengersThe new training program is providing election administration training for election officials across the Commonwealth.

Read more »

Polling places inside synagogues are being moved for Pennsylvania's April primary during PassoverThe new training program is providing election administration training for election officials across the Commonwealth.

Polling places inside synagogues are being moved for Pennsylvania's April primary during PassoverThe new training program is providing election administration training for election officials across the Commonwealth.

Read more »

Pa. Dems unveil new school safety legislation, named after victim of Parkland school shootingThe new training program is providing election administration training for election officials across the Commonwealth.

Pa. Dems unveil new school safety legislation, named after victim of Parkland school shootingThe new training program is providing election administration training for election officials across the Commonwealth.

Read more »

BTS’s Suga Enters Army Training Center + To Resume Service After Receiving Basic Military TrainingGray, Woo Won Jae, Lee Hi, and GooseBumps have parted ways with AOMG. On March 28, AOMG released a statement on their official social media accounts announcing the expiration of the exclusive contracts with their artists Gray, Woo Won Jae, Lee Hi, and GooseBumps. Read the full statement below: Hello, this is AOMG.

BTS’s Suga Enters Army Training Center + To Resume Service After Receiving Basic Military TrainingGray, Woo Won Jae, Lee Hi, and GooseBumps have parted ways with AOMG. On March 28, AOMG released a statement on their official social media accounts announcing the expiration of the exclusive contracts with their artists Gray, Woo Won Jae, Lee Hi, and GooseBumps. Read the full statement below: Hello, this is AOMG.

Read more »