Learn about data engineering strategies and efficient computation techniques for large-scale data processing.

Authors: Jonathan H. Rystrøm. Table of Links Abstract and Introduction Previous Literature Methods and Data Results Discussions Conclusions and References A. Validation of Assumptions B. Other Models C.

Pre-processing steps C Pre-processing steps Dealing with a dataset with millions of rows and complex types like ”categories” and ”dates” requires special engineering considerations. This section outlines the pre-processing steps required to get the data from Ni et al. in an analysis-ready shape. All pre-processing of the data was done using python . This is particularly because of the rich ecosystem of scientific packages. For this project we use numpy , pandas , and numba for efficient large-scale data processing. We also use scikit-learn to efficiently parse categories . Most computations were performed on the Oxford Internet Institute’s HPC cluster. This allowed us to benefit from multi-core processing and increased RAM. The first step is creating a dataset of category relevance for the books . Here, we simply take the original gzipped file and extract a list of categories and item ID . This drastically reduces the file size, so we can do the computations in memory. The next step is preparing the rating data. We start by filtering the dataset to only have users with more than 20 ratings. This reduces the dataset considerably as we saw in Fig. 2. We then left-join the data with the category similarity data described above. Each row now consists of a user id, category id, timestamp, and preference score for each rating that the user has made for any given category. Note, that each individual rating can be represented in multiple rows if a book has multiple categories . Finally, we summarise the data to get the sum of preference scores and amount of ratings per user, category, and quarter. This gives us a further reduced dataset that is more manageable to work with. Revealed preferences are defined as the weighted sum of ratings and category relevance , so this decision is mainly one of granularity. This paper is under CC 4.0 license. available on arxiv

United States Latest News, United States Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

Using Autodiff to Estimate Posterior Moments, Marginals and Samples: Experimental Datasets and ModelImportance weighting allows us to reweight samples drawn from a proposal in order to compute expectations of a different distribution.

Using Autodiff to Estimate Posterior Moments, Marginals and Samples: Experimental Datasets and ModelImportance weighting allows us to reweight samples drawn from a proposal in order to compute expectations of a different distribution.

Read more »

The biggest AI companies agree to crack down on child abuse imagesCompanies like Amazon, Google, Meta, Microsoft, and OpenAI commit to a set of principles that aims to remove and avoid problematic images in datasets to train AI models.

The biggest AI companies agree to crack down on child abuse imagesCompanies like Amazon, Google, Meta, Microsoft, and OpenAI commit to a set of principles that aims to remove and avoid problematic images in datasets to train AI models.

Read more »

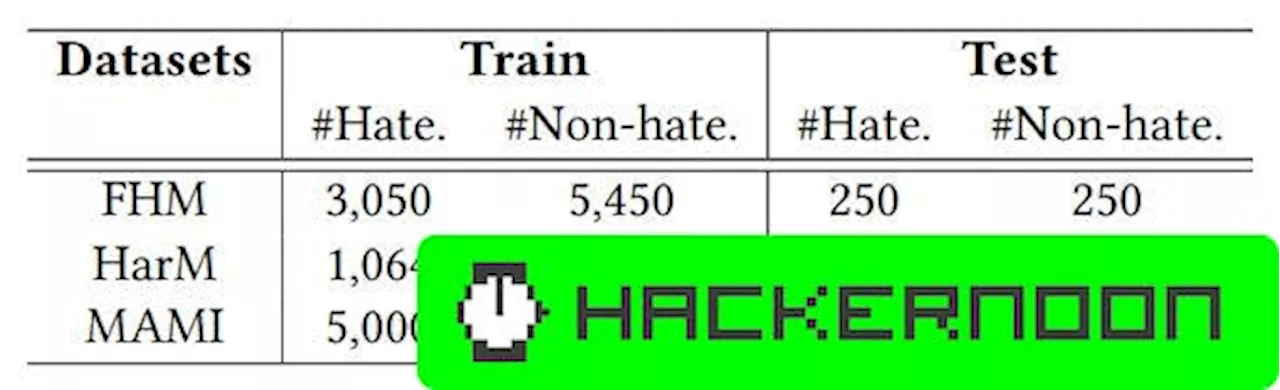

Performance Analysis of Diverse Hateful Meme Detection DatasetsDelve into the evaluation and analysis of a probing-based approach for detecting hateful memes.

Performance Analysis of Diverse Hateful Meme Detection DatasetsDelve into the evaluation and analysis of a probing-based approach for detecting hateful memes.

Read more »

Amazon’s Ali Kole Talks the Multifaceted Amazon ShopperAfter signing Clinique, Amazon Premium Beauty outlines the various purchasing behaviors motivating its consumers.

Amazon’s Ali Kole Talks the Multifaceted Amazon ShopperAfter signing Clinique, Amazon Premium Beauty outlines the various purchasing behaviors motivating its consumers.

Read more »

Early deals we're seeing ahead of Amazon Pet DayYou've heard of Amazon Prime Day, but have you heard of Amazon Pet Day?

Early deals we're seeing ahead of Amazon Pet DayYou've heard of Amazon Prime Day, but have you heard of Amazon Pet Day?

Read more »

Amazon Prime Day 2024: Amazon confirms the shopping holiday for JulyAmazon has confirmed that there will be a Prime Day in July. Here's everything you need to know to prepare for the massive shopping holiday.

Amazon Prime Day 2024: Amazon confirms the shopping holiday for JulyAmazon has confirmed that there will be a Prime Day in July. Here's everything you need to know to prepare for the massive shopping holiday.

Read more »