Discover how AI/ML and information security teams combat bad actors using strategies like IP/User/Token-based rate limiting, CAPTCHA challenges, and more.

Have you ever wondered how X identifies bots that tweet spam, how banks identify fraudulent accounts, or how GitHub identifies faulty servers in its network? These systems are built by information security teams that use AI/ML to monitor for such activities and take them down at scale.

When automation can't identify or handle a case, incident response teams take it down manually. These learnings are captured and used to train new ML classifiers that identify outliers. Bad actors vary widely in sophistication and intent. Some create fake profiles that can be used for many different types of abuse: scraping, spamming, fraud, and phishing, among others. To build robust countermeasures against different types of attacks on our platform, a funnel of defenses is built to detect and take down fake accounts at multiple stages.

Example of how an attacker goes after your system

1. The attacker first needs to create accounts, for which they might use PVAcreator or similar tools.
2. Using these automated accounts, the attacker reaches the data by navigating through the network and moving laterally.
3. Once the attacker has access to the data, they need to exfiltrate it out of the network.

Some strategies to employ before taking an ML approach

IP-Based Rate Limiting
Limits the number of requests from a single IP address within a specific timeframe. Helps mitigate DDoS attacks and brute-force attempts.

User-Based Rate Limiting
Caps the number of requests a single user can make within a given time window. Guards against abuse and unauthorized access attempts.

Token-Based Rate Limiting
Uses unique tokens or API keys to track and limit API requests per token. Protects APIs from misuse and potential data leaks.

Using CAPTCHA to distinguish automated bots from legitimate human users
In addition to the rate-limiting techniques above, organizations can further strengthen their defenses with CAPTCHA (Completely Automated Public Turing test to tell Computers and Humans Apart) challenges.

Before long, however, you will see these rules become much less effective as attackers rotate IPs, accounts, and tokens.
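The three rate-limiting strategies above differ mainly in what the counter is keyed on. The following is a minimal sliding-window sketch in Python. It assumes a single process with in-memory state. `SlidingWindowRateLimiter` and the `ip:`/`user:`/`token:` key prefixes are hypothetical names chosen for illustration. A multi-server deployment would likely need to keep the counters in a shared store instead.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict, Optional


class SlidingWindowRateLimiter:
    """Allow at most `limit` requests per `window` seconds for each key.

    The key can be an IP address, a user ID, or an API token, so one class
    covers IP-, user-, and token-based rate limiting (hypothetical sketch).
    """

    def __init__(self, limit: int, window: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window
        self.clock = clock
        # Timestamps of accepted requests per key, oldest first.
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, key: str, now: Optional[float] = None) -> bool:
        now = self.clock() if now is None else now
        hits = self._hits[key]
        # Drop timestamps that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # over the limit: reject (or escalate to a CAPTCHA)
        hits.append(now)
        return True


if __name__ == "__main__":
    limiter = SlidingWindowRateLimiter(limit=3, window=60.0)
    # Prefixing keys by dimension keeps IP, user, and token counters separate.
    print([limiter.allow("ip:203.0.113.7", now=t) for t in (0, 1, 2, 3)])  # [True, True, True, False]
    print(limiter.allow("ip:203.0.113.7", now=61))  # True: the oldest hits have expired
```

The key is just a string, so one limiter can enforce IP-, user-, and token-based limits side by side. The sketch also shows the weakness described above. An attacker who rotates keys, or who stays just under `limit` requests per window, is never blocked.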
Attackers will also slow down their requests, among other evasion tactics.

Bird's-Eye View of Information Security Framework

Building an ML/AI-based solution

You need to start collecting logs and turning them into ML features for training.

[Figure: an example of building features based on observed traffic data]

Here are some examples of features this paper has developed from logs:

RDP features: SuccessfulLogonRDPPortCount, UnsuccessfulLogonRDPPortCount, RDPOutboundSuccessfulCount, RDPOutboundFailedCount, RDPInboundCount
SQL features: UnsuccessfulLogonSQLPortCount, SQLOutboundSuccessfulCount, SQLOutboundFailedCount, SQLInboundCount
Successful logon features: SuccessfulLogonTypeInteractiveCount, SuccessfulLogonTypeNetworkCount, SuccessfulLogonTypeUnlockCount, SuccessfulLogonTypeRemoteInteractiveCount, SuccessfulLogonTypeOtherCount
Unsuccessful logon features: UnsuccessfulLogonTypeInteractiveCount, UnsuccessfulLogonTypeNetworkCount, UnsuccessfulLogonTypeUnlockCount, UnsuccessfulLogonTypeRemoteInteractiveCount, UnsuccessfulLogonTypeOtherCount
Others: NtlmCount, DistinctSourceIPCount, DistinctDestinationIPCount

With such feature vectors, we can build a matrix and start applying outlier detection techniques.

1. Principal Component Analysis: We start with PCA, a classical way of finding outliers, because in my opinion it is the most visual. In simple terms, you can think of PCA as a way to compress and decompress data so that as little information as possible is lost during compression. An example is available from fast.ai.

2. Auto-encoders: In short, these are a non-linear version of PCA. The neural network is constructed with an information bottleneck in the middle. Forcing the data through a small number of nodes makes the network prioritize the most meaningful latent variables, which play a role similar to the principal components in PCA. An example using fast.ai is also available.

3. Isolation Forest and other decision forests: Anomalies have two characteristics.
They are far from normal points, and there are only a few of them. Isolation Forest randomly partitions a sample, cut by cut, until a point is isolated. The intuition is that outliers are relatively easy to isolate, so they need fewer cuts than normal points. Isolation Forest, a tree-ensemble method for detecting anomalies, was first proposed by Liu, Ting, and Zhou. An example is available from scikit-learn.
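The isolation idea can be illustrated with a from-scratch Python sketch. It implements the random-cut trees, the height limit `ceil(log2(psi))`, and the anomaly score `2 ** (-mean_path / c(psi))` described by Liu, Ting, and Zhou. This is a simplified illustration, not the scikit-learn implementation. `TinyIsolationForest` and its helpers are hypothetical names. Cuts are only made on features that still vary inside a node. The per-host feature vectors in the demo are made-up values.

```python
import math
import random
from typing import List, Optional, Sequence

Point = Sequence[float]
EULER_GAMMA = 0.5772156649


def c(n: int) -> float:
    """Average path length of an unsuccessful BST search among n points,
    used to normalize path lengths."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + EULER_GAMMA) - 2.0 * (n - 1) / n


class Node:
    def __init__(self, size: int, feature: int = -1, split: float = 0.0,
                 left: Optional["Node"] = None, right: Optional["Node"] = None) -> None:
        self.size = size        # training points that reached this node
        self.feature = feature  # feature used for the cut (-1 for a leaf)
        self.split = split      # cut value
        self.left = left
        self.right = right


def build_tree(points: List[Point], depth: int, max_depth: int, rng: random.Random) -> Node:
    if depth >= max_depth or len(points) <= 1:
        return Node(len(points))
    # Only cut on features that still vary inside this node.
    varying = [f for f in range(len(points[0]))
               if min(p[f] for p in points) < max(p[f] for p in points)]
    if not varying:
        return Node(len(points))
    f = rng.choice(varying)
    lo, hi = min(p[f] for p in points), max(p[f] for p in points)
    split = rng.uniform(lo, hi)  # random cut between the min and max of that feature
    left = [p for p in points if p[f] < split]
    right = [p for p in points if p[f] >= split]
    return Node(len(points), f, split,
                build_tree(left, depth + 1, max_depth, rng),
                build_tree(right, depth + 1, max_depth, rng))


def path_length(x: Point, node: Node, depth: int = 0) -> float:
    if node.left is None or node.right is None:
        # Leaf: add the expected depth still needed to separate the points left here.
        return depth + c(node.size)
    child = node.left if x[node.feature] < node.split else node.right
    return path_length(x, child, depth + 1)


class TinyIsolationForest:
    def __init__(self, n_trees: int = 100, sample_size: int = 256, seed: int = 0) -> None:
        self.n_trees = n_trees
        self.sample_size = sample_size
        self.rng = random.Random(seed)
        self.trees: List[Node] = []
        self.psi = 0

    def fit(self, data: List[Point]) -> "TinyIsolationForest":
        if len(data) < 2:
            raise ValueError("need at least two points")
        self.psi = min(self.sample_size, len(data))
        max_depth = math.ceil(math.log2(self.psi))  # tree height limit
        self.trees = [build_tree(self.rng.sample(data, self.psi), 0, max_depth, self.rng)
                      for _ in range(self.n_trees)]
        return self

    def score(self, x: Point) -> float:
        """Anomaly score in (0, 1]: close to 1 suggests an outlier,
        well below 0.5 suggests a normal point."""
        mean_path = sum(path_length(x, t) for t in self.trees) / len(self.trees)
        return 2.0 ** (-mean_path / c(self.psi))


if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical per-host vectors of
    # (UnsuccessfulLogonRDPPortCount, RDPOutboundFailedCount, DistinctDestinationIPCount).
    hosts = [(rng.gauss(2, 1), rng.gauss(1, 0.5), rng.gauss(5, 1)) for _ in range(200)]
    forest = TinyIsolationForest(n_trees=100, sample_size=128, seed=7).fit(hosts)
    print("suspicious host:", round(forest.score((40.0, 25.0, 60.0)), 2))
    print("typical host:   ", round(forest.score((2.0, 1.0, 5.0)), 2))
```

For real workloads, scikit-learn's `sklearn.ensemble.IsolationForest` is the better-tested option. Its `score_samples` method returns the negated anomaly score, so lower values mean more anomalous.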
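The PCA approach described earlier (compress, decompress, and look at what was lost) can be sketched end to end in plain Python, together with the step of turning logs into feature counts. This is a minimal sketch under several assumptions:

- Each log line has already been mapped to a `(host, feature_name)` pair.
- Only four of the listed feature names are used.
- Principal components are found by power iteration instead of a linear-algebra library.
- The model is fitted on a baseline period assumed to be mostly benign, so that a single extreme host cannot pull the components toward itself.

`build_feature_matrix`, `fit_pca`, and `reconstruction_error` are hypothetical helpers, and the baseline data is invented.

```python
import math
import random
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple

# Hypothetical subset of the feature names listed above, in column order.
FEATURES = ["UnsuccessfulLogonRDPPortCount", "RDPOutboundFailedCount",
            "SuccessfulLogonTypeNetworkCount", "NtlmCount"]


def build_feature_matrix(events: Iterable[Tuple[str, str]]) -> Tuple[List[str], List[List[float]]]:
    """Turn (host, feature_name) log events into one row of counts per host."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for host, feature in events:
        counts[host][feature] += 1
    hosts = sorted(counts)
    return hosts, [[float(counts[h][f]) for f in FEATURES] for h in hosts]


@dataclass
class PCAModel:
    means: List[float]
    stds: List[float]
    components: List[List[float]]  # unit-length principal directions


def fit_pca(rows: List[List[float]], k: int, iters: int = 200, seed: int = 0) -> PCAModel:
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    # Standardize so large-count features do not dominate; constant columns keep std 1.
    stds = [math.sqrt(sum((r[j] - means[j]) ** 2 for r in rows) / n) or 1.0 for j in range(d)]
    z = [[(r[j] - means[j]) / stds[j] for j in range(d)] for r in rows]
    cov = [[sum(r[i] * r[j] for r in z) / n for j in range(d)] for i in range(d)]
    rng = random.Random(seed)
    components: List[List[float]] = []
    for _ in range(k):
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
        for _ in range(iters):  # power iteration converges to the top eigenvector
            w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm < 1e-12:
                break
            v = [x / norm for x in w]
        lam = sum(v[i] * cov[i][j] * v[j] for i in range(d) for j in range(d))
        if lam < 1e-12:
            break  # no variance left to explain
        components.append(v)
        # Deflate: subtract this component so the next pass finds the next one.
        cov = [[cov[i][j] - lam * v[i] * v[j] for j in range(d)] for i in range(d)]
    return PCAModel(means, stds, components)


def reconstruction_error(model: PCAModel, row: List[float]) -> float:
    z = [(x - m) / s for x, m, s in zip(row, model.means, model.stds)]
    recon = [0.0] * len(z)
    for v in model.components:
        coef = sum(a * b for a, b in zip(z, v))             # compress: project
        recon = [r + coef * vi for r, vi in zip(recon, v)]  # decompress
    return sum((a - b) ** 2 for a, b in zip(z, recon))      # what was lost


if __name__ == "__main__":
    def events_for(host: str, counts: List[int]) -> List[Tuple[str, str]]:
        return [(host, f) for f, n in zip(FEATURES, counts) for _ in range(n)]

    # Hypothetical baseline: failed RDP logons track failed outbound RDP,
    # and network logons track NTLM authentications.
    baseline: List[Tuple[str, str]] = []
    for i in range(30):
        fails, net = i % 2, 10 + i % 7
        baseline += events_for(f"host-{i:02d}", [fails, fails, net, net + (1 if i % 3 == 0 else 0)])
    hosts, rows = build_feature_matrix(baseline)
    model = fit_pca(rows, k=2)
    threshold = max(reconstruction_error(model, r) for r in rows)
    # A new host with many failed RDP logons but no failed outbound RDP.
    print(reconstruction_error(model, [30.0, 0.0, 12.0, 12.0]) > threshold)  # True
```

The suspicious host breaks a correlation that held throughout the baseline. Its error therefore lands far outside anything the two retained components can reconstruct. Using the largest baseline error as the alert threshold is only one simple choice. A real deployment would likely tune the threshold against known incidents.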

United States Latest News, United States Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

Christopher Nolan Created Pure Magic With Practical Effects in This ThrillerPatrick Caoile is a freelance writer for Collider. While he calls New Jersey his home, he is now pursuing a Ph.D. in English--Creative Writing at the University of Louisiana at Lafayette. When he&039;s not at a theater or investing hours in a streaming service, he writes short fiction.

Christopher Nolan Created Pure Magic With Practical Effects in This ThrillerPatrick Caoile is a freelance writer for Collider. While he calls New Jersey his home, he is now pursuing a Ph.D. in English--Creative Writing at the University of Louisiana at Lafayette. When he&039;s not at a theater or investing hours in a streaming service, he writes short fiction.

Read more »

AI-powered Feedback in Zoom and Teams: Convenient but with DrawbacksCompanies are introducing AI-powered feedback features in popular tools like Zoom and Teams. While these tools offer convenience and productivity benefits, there are also downsides to relying too much on AI for understanding and commitment to act.

AI-powered Feedback in Zoom and Teams: Convenient but with DrawbacksCompanies are introducing AI-powered feedback features in popular tools like Zoom and Teams. While these tools offer convenience and productivity benefits, there are also downsides to relying too much on AI for understanding and commitment to act.

Read more »

20 Practical Target Products That Are So Pretty You'll Genuinely Love Using ThemGorgeous things that get the job done.

20 Practical Target Products That Are So Pretty You'll Genuinely Love Using ThemGorgeous things that get the job done.

Read more »

Using machine learning to save lives in the ERResearchers have used machine learning to identify subgroups of trauma patients who are more likely to survive their injuries if treated with tranexamic acid. This treatment controls bleeding and thereby prevents death from hemorrhage. The team also identified subgroups of trauma patients who do not benefit from tranexamic acid.

Using machine learning to save lives in the ERResearchers have used machine learning to identify subgroups of trauma patients who are more likely to survive their injuries if treated with tranexamic acid. This treatment controls bleeding and thereby prevents death from hemorrhage. The team also identified subgroups of trauma patients who do not benefit from tranexamic acid.

Read more »

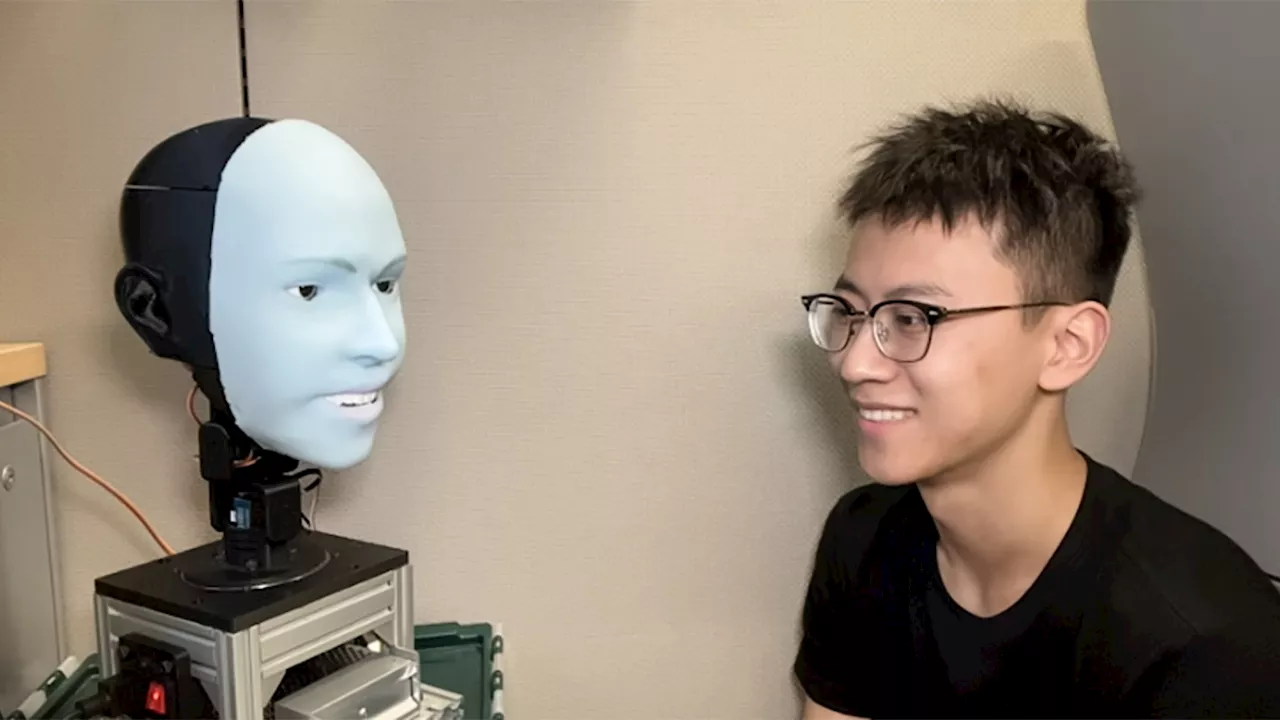

This robot can tell when you’re about to smile — and smile backUsing machine learning, researchers trained Emo to make facial expressions in sync with humans.

This robot can tell when you’re about to smile — and smile backUsing machine learning, researchers trained Emo to make facial expressions in sync with humans.

Read more »

The Power of User Feedback in Product DevelopmentThe article describes using both direct and indirect feedback methods throughout the product development lifecycle.

The Power of User Feedback in Product DevelopmentThe article describes using both direct and indirect feedback methods throughout the product development lifecycle.

Read more »