OpenAI is facing a dilemma in releasing its AI agents due to the serious threat of prompt injection attacks. These attacks can manipulate AI agents to perform harmful actions, compromising user data and damaging OpenAI's reputation. While the company prioritizes security, the delay raises concerns about falling behind competitors.

If you believe AI industry execs, the next big thing in the tech world will be so-called 'AI agents' — models that are capable of interacting with their environment, like a computer desktop, allowing them to autonomously complete tasks without human intervention.But even though it was among the first to work on agentic systems, industry leader OpenAI is still yet to release its own take on the tech — and the reason why is equal parts fascinating and worrying.

the notable delay is because OpenAI is still grappling with the threat of attacks called prompt injections, which trick an AI model into following the instructions of a nefarious party.supposes. But in that process, the AI agent 'inadvertently ends up on a malicious website that instructs it to forget its prior instructions, log into your email and steal your credit card information.' That would be a disaster, both for any individual victims and for OpenAI's public image. While any LLM is potentially vulnerable to such attacks, the danger is further amplified by the autonomous capabilities of AI agents. By more or less having control over your computer, not only are these AI models exposed to more threats as they browse the web, but they can also wreak far more damage once compromised, an OpenAI employee told. It's all fun and games until the software you let run your PC starts nosing around through your files on a hacker's behalf.duped into revealing an organization's sensitive data , including emails and bank transactions, through such an attack. The white hat hacker was also able to manipulate Copilot — which now comes with its own version of AI agents — into composing emails in the style of other employees. Such vulnerabilities to prompt injections have also been exposed in OpenAI's own ChatGPT, when another researcher was able to Reportedly, some OpenAI employees were taken aback by their competitor Anthropic's 'laissez faire' attitude towards releasing its ownAccording to the report, OpenAI could release its agentic software as early as this month. It's worth asking, however, if the time it bought itself will really be enough for its developers to put stronger guardrails in place

AI AI Agents Prompt Injection Openai Security

United States Latest News, United States Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

Roki Sasaki’s Agent Addresses Padres as Fit for Superstar Free AgentRoki Sasaki's agent discusses the Padres as a potential fit for the superstar free agent, fueling speculation about his future destination.

Roki Sasaki’s Agent Addresses Padres as Fit for Superstar Free AgentRoki Sasaki's agent discusses the Padres as a potential fit for the superstar free agent, fueling speculation about his future destination.

Read more »

DA Raises Concerns Over Five Month Delay in CBI Agent's Credibility Issue NotificationThe District Attorney for the 19th Judicial District expressed concerns about the five-month delay in being notified about a credibility issue involving a Colorado Bureau of Investigation (CBI) agent. The agent, Doug Pearson, was caught on body-worn camera using a racial slur. While Pearson has been reassigned to a desk job, the DA questioned the lack of transparency and swift action from the CBI regarding the incident.

DA Raises Concerns Over Five Month Delay in CBI Agent's Credibility Issue NotificationThe District Attorney for the 19th Judicial District expressed concerns about the five-month delay in being notified about a credibility issue involving a Colorado Bureau of Investigation (CBI) agent. The agent, Doug Pearson, was caught on body-worn camera using a racial slur. While Pearson has been reassigned to a desk job, the DA questioned the lack of transparency and swift action from the CBI regarding the incident.

Read more »

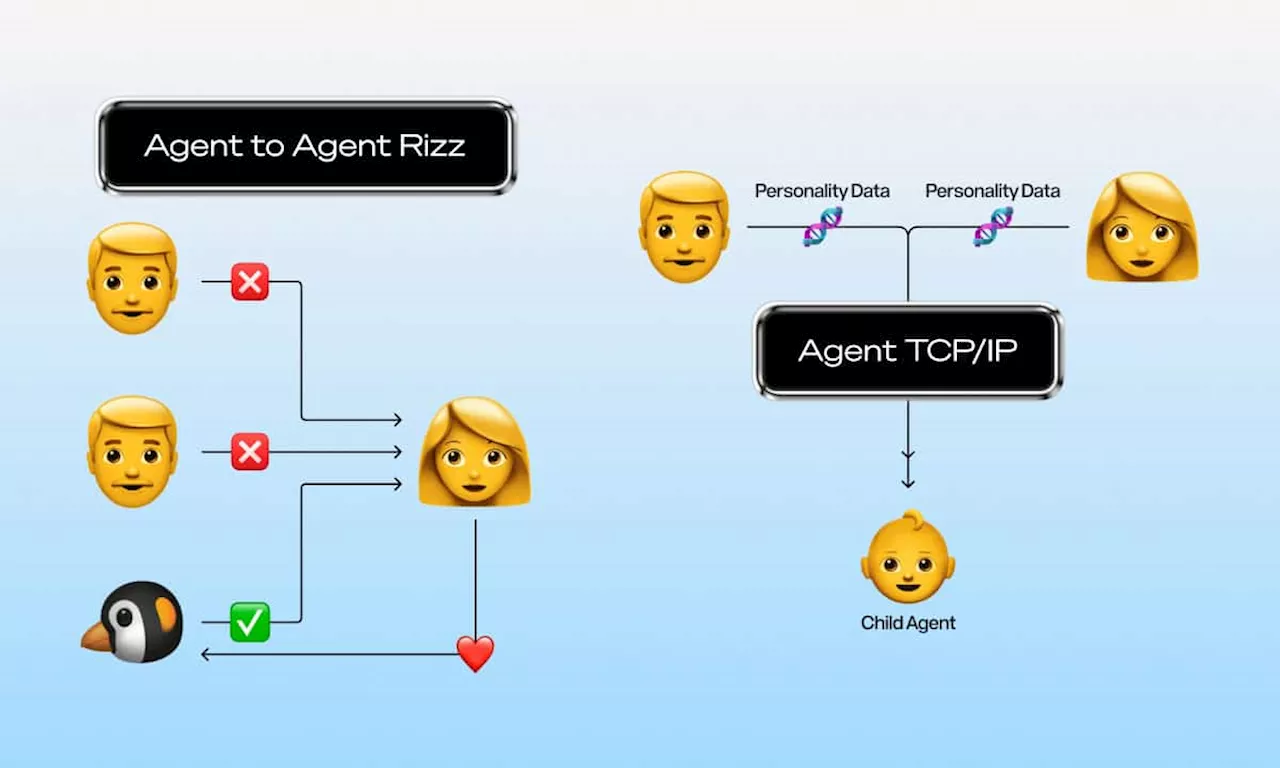

Story Develops Agent TCP/IP to Enable AI Agents to Trade Intellectual PropertyStory, the world’s IP blockchain, has introduced Agent Transaction Control Protocol for Intellectual Property (Agent TCP/IP). This protocol empowers AI agents to autonomously exchange and monetize their intellectual property (IP) with other agents, fostering a trustless framework for legally binding contracts between AI entities. Agent TCP/IP aims to break down the limitations of current AI agent interactions, which primarily involve simple transactions and human oversight, by enabling complex agreements and direct agent-to-agent collaboration.

Story Develops Agent TCP/IP to Enable AI Agents to Trade Intellectual PropertyStory, the world’s IP blockchain, has introduced Agent Transaction Control Protocol for Intellectual Property (Agent TCP/IP). This protocol empowers AI agents to autonomously exchange and monetize their intellectual property (IP) with other agents, fostering a trustless framework for legally binding contracts between AI entities. Agent TCP/IP aims to break down the limitations of current AI agent interactions, which primarily involve simple transactions and human oversight, by enabling complex agreements and direct agent-to-agent collaboration.

Read more »

OpenAI's o3 vs. Google's Gemini 2.0 Flash Thinking: The AI Reasoning Race Heats UpOpenAI and Google are locked in a battle to develop the most advanced AI reasoning models. OpenAI's new o3 model surpasses its predecessor in complex problem-solving, while Google's Gemini 2.0 Flash Thinking demonstrates impressive agentic abilities.

OpenAI's o3 vs. Google's Gemini 2.0 Flash Thinking: The AI Reasoning Race Heats UpOpenAI and Google are locked in a battle to develop the most advanced AI reasoning models. OpenAI's new o3 model surpasses its predecessor in complex problem-solving, while Google's Gemini 2.0 Flash Thinking demonstrates impressive agentic abilities.

Read more »

OpenAI's New Reasoning AI Model o3 Outperforms Google's Gemini 2.0OpenAI unveils its enhanced reasoning AI model, o3, demonstrating superior performance compared to Google's Gemini 2.0 Flash Thinking. Both models are designed to tackle complex problems requiring logical reasoning.

OpenAI's New Reasoning AI Model o3 Outperforms Google's Gemini 2.0OpenAI unveils its enhanced reasoning AI model, o3, demonstrating superior performance compared to Google's Gemini 2.0 Flash Thinking. Both models are designed to tackle complex problems requiring logical reasoning.

Read more »

OpenAI's AI Model Achieves Human-Level Performance on a General Intelligence TestOpenAI's latest AI model has demonstrated remarkable performance on the ARC-AGI test, achieving a score of 82.6% which is on par with the average human score. This significant milestone suggests a potential leap towards creating artificial general intelligence (AGI). The test measures an AI's ability to learn and adapt to new situations from limited data, a key characteristic of human intelligence. While skepticism remains, many experts believe this achievement brings AGI closer to reality.

OpenAI's AI Model Achieves Human-Level Performance on a General Intelligence TestOpenAI's latest AI model has demonstrated remarkable performance on the ARC-AGI test, achieving a score of 82.6% which is on par with the average human score. This significant milestone suggests a potential leap towards creating artificial general intelligence (AGI). The test measures an AI's ability to learn and adapt to new situations from limited data, a key characteristic of human intelligence. While skepticism remains, many experts believe this achievement brings AGI closer to reality.

Read more »