Knowing a little more about how biological vision works can help students to recognize what’s behind the arc of computer vision advancements.

One of the most useful ways to look at new artificial intelligence technologies is to evaluate how they mimic biological designs. We know this at a very basic level when we talk about neural networks.

It’s easy to understand the basic premise: individual artificial neurons and layers function like the biological neurons of the human brain, sending signals back and forth.

Let’s take the phenomenon of vision, which is fundamental to almost everything that we do as humans. When we look at the evolution of vision and the creation of artificial intelligence systems, we see that computer vision is maybe the biggest single piece of the entire puzzle. We also understand how the mechanics of vision work along with the brain’s design, or in the case of AI, the neural network’s design.

For a long time, scientists have been tracing the history of biological vision, from smaller and simpler organisms to highly evolved species and, ultimately, to the human brain, the masterpiece of biological thought. The evolution of AI vision, by contrast, happened mostly in the latter part of the 20th century, with developments like optical character recognition and the convolutional neural network, which brings a sophisticated approach to analyzing a field of vision.

I’m going to use some material from a presentation by my colleague Ramesh Raskar, given while he was teaching the MIT Ventures class and talking about how vision varies in the biological world. He was showing the students how we approach artificial vision and the context in which it is used.

Although mammals have a lens in their eyes, he explained, certain types of sea life don’t have lenses, and instead have a sort of “mirror” design. Looking at those differences, you start to see how vision works mechanically, and how it applies to computer vision. He also pointed out that a lot of our vision is composed of cognitive processes, where we “fill in the blanks” around what we’re actually seeing. “We’re just making stuff up,” he said, comparing our visual field assembly to generative models like Sora and Dall-E.

In short, he showed how your actual visual field is smaller than you think, and how the periphery is sidelined in favor of a narrower focal space. That’s not to mention how we fill in around the ...

Later, Ramesh went over advances like edge detection in the 1980s, and explained how they track with some basic biology. The cones of the eye, he pointed out, deal with intensity, whereas the rods deal with motion, and various filters apply.

Starting with Minsky and Papert’s Perceptron work in 1969, he talked about how people used classical methods and newer technologies to do things like facial recognition. “If I have to detect a face, then all I have to do is look for a blank region at the top, then a slightly darker region under the eyes, look for a vertical line in the middle, and another one,” he explained. “So it's almost like a cartoon representation. But these structures have to appear in this particular way.”

He went over how the perceptron rates pixels in order to analyze an image and produce its findings. He also noted strategies like grouping and matching, the creation of CNN layers, and other aspects of how convolutional neural networks ushered in the age of robust computer vision, and credited Fukushima for his “neocognitron” contributions in the late 1970s.

Among those who have built on early computer vision and learning ideas more recently, he cited figures like Geoff Hinton and Yann LeCun, who looked at more detailed network results. In terms of the technology, we’ve more recently seen the rise of transformers, which are built around an attention mechanism. That goes back to the idea that vision is all about building off of what you actually see and extrapolating results.

“We want you to know about the history, because it tells you how this is going to play out moving forward,” he said to the class. I thought this was a useful idea to think about as we move forward in evaluating what AI systems do in our modern world.

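
To make the “cartoon representation” of a face more concrete, here is a minimal Python sketch of that kind of classical, rule-based check. It is only an illustration under stated assumptions, not the method shown in the class: the function names `region_mean` and `looks_like_face`, the split of the image into thirds, and the `min_contrast` threshold are all hypothetical choices, and the image is assumed to be a small grayscale grid with 0.0 for black and 1.0 for white.

```python
from typing import List

# Assumption: a grayscale image is a list of rows, 0.0 = black, 1.0 = white.
Image = List[List[float]]


def region_mean(image: Image, top: int, left: int, height: int, width: int) -> float:
    """Average brightness of a rectangular patch of the image."""
    total = 0.0
    for row in image[top:top + height]:
        total += sum(row[left:left + width])
    return total / (height * width)


def looks_like_face(image: Image, min_contrast: float = 0.2) -> bool:
    """Hypothetical rule: a bright band at the top (forehead), a darker band
    below it (eyes), and a brighter vertical strip in the middle of that
    darker band (nose) flanked by two darker patches."""
    height = len(image)
    width = len(image[0])
    band = height // 3   # height of the forehead band and of the eye band
    third = width // 3   # width of the left-eye, nose, and right-eye patches

    forehead = region_mean(image, 0, 0, band, width)
    eye_band = region_mean(image, band, 0, band, width)
    left_eye = region_mean(image, band, 0, band, third)
    nose = region_mean(image, band, third, band, third)
    right_eye = region_mean(image, band, 2 * third, band, third)

    # Every structure has to appear in this particular arrangement.
    return (
        forehead - eye_band >= min_contrast
        and nose - left_eye >= min_contrast
        and nose - right_eye >= min_contrast
    )


# A 6x6 "cartoon" face: bright forehead, dark eyes around a bright nose.
face = [
    [0.9, 0.9, 0.9, 0.9, 0.9, 0.9],
    [0.9, 0.9, 0.9, 0.9, 0.9, 0.9],
    [0.2, 0.2, 0.8, 0.8, 0.2, 0.2],
    [0.2, 0.2, 0.8, 0.8, 0.2, 0.2],
    [0.7, 0.7, 0.7, 0.7, 0.7, 0.7],
    [0.7, 0.7, 0.7, 0.7, 0.7, 0.7],
]
# A uniformly gray image with no structure at all.
flat = [[0.5] * 6 for _ in range(6)]

print(looks_like_face(face))  # True
print(looks_like_face(flat))  # False
```

The rule compares average brightness between neighboring rectangles instead of inspecting single pixels, which is roughly why such hand-built detectors can tolerate some noise but fail as soon as the structures stop appearing in the expected places.
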
That process of evolution informs our use of AI, and knowing about it is essential. So I was happy to see these students take that journey and learn more about what’s behind computer vision. Take time to think about this process and its historical context if you’re trying to work on applications of more modern AI concepts.

United States Latest News, United States Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

Vision Quest: Exploring the Depths of Vision's HumanityMarvel Studios' upcoming Vision Quest series dives into the aftermath of WandaVision, following Vision's journey to reclaim his memories and rediscover his humanity. The series boasts a stellar cast, including Paul Bettany, James Spader, Faran Tahir, and newcomer Alessandro Mollica.

Vision Quest: Exploring the Depths of Vision's HumanityMarvel Studios' upcoming Vision Quest series dives into the aftermath of WandaVision, following Vision's journey to reclaim his memories and rediscover his humanity. The series boasts a stellar cast, including Paul Bettany, James Spader, Faran Tahir, and newcomer Alessandro Mollica.

Read more »

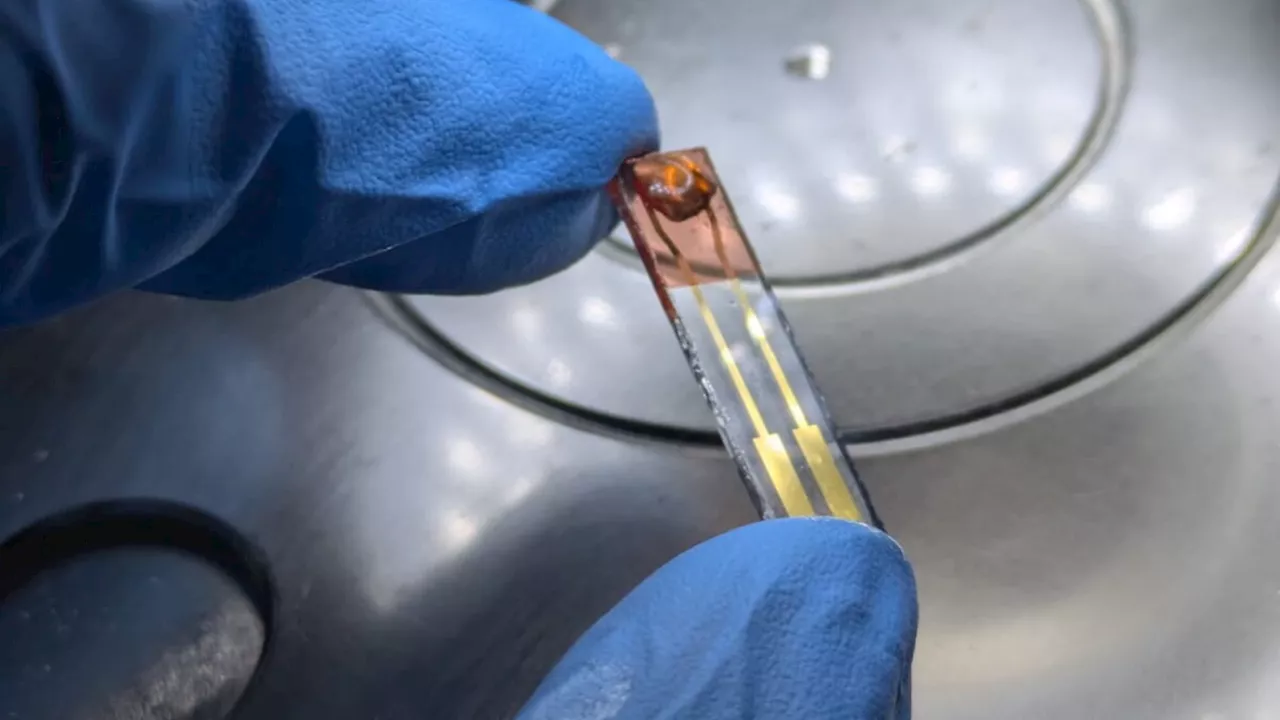

Honey-Powered Artificial Vision: A Sustainable Approach to AIResearchers at the University of Glasgow have developed a new artificial vision system inspired by the human brain and powered in part by honey. This energy-efficient device, called EGOFET, uses biodegradable and recyclable materials, addressing the environmental concerns associated with traditional silicon-based technology.

Honey-Powered Artificial Vision: A Sustainable Approach to AIResearchers at the University of Glasgow have developed a new artificial vision system inspired by the human brain and powered in part by honey. This energy-efficient device, called EGOFET, uses biodegradable and recyclable materials, addressing the environmental concerns associated with traditional silicon-based technology.

Read more »

Omega-3s & Vitamin D Shown To Slow Biological Aging in New StudyA new study published in Nature Aging reveals that the combination of omega-3 fatty acids and vitamin D supplements may effectively slow down biological aging. The study utilized epigenetic clocks to measure biological age and found that participants who took these supplements experienced a measurable reduction in aging markers compared to those who did not.

Omega-3s & Vitamin D Shown To Slow Biological Aging in New StudyA new study published in Nature Aging reveals that the combination of omega-3 fatty acids and vitamin D supplements may effectively slow down biological aging. The study utilized epigenetic clocks to measure biological age and found that participants who took these supplements experienced a measurable reduction in aging markers compared to those who did not.

Read more »

Biological aging may not be driven by what we thoughtNicoletta Lanese is the health channel editor at Live Science and was previously a news editor and staff writer at the site. She holds a graduate certificate in science communication from UC Santa Cruz and degrees in neuroscience and dance from the University of Florida.

Biological aging may not be driven by what we thoughtNicoletta Lanese is the health channel editor at Live Science and was previously a news editor and staff writer at the site. She holds a graduate certificate in science communication from UC Santa Cruz and degrees in neuroscience and dance from the University of Florida.

Read more »

Understanding Biological Stress: A Path to EquilibriumThis article explores the biological mechanisms behind stress, focusing on the role of cortisol and the HPA axis. It explains the concept of allostasis and how chronic stress can disrupt this balance. The text also offers practical suggestions for managing stress through mindfulness and self-care practices, emphasizing that even small changes can make a significant difference.

Understanding Biological Stress: A Path to EquilibriumThis article explores the biological mechanisms behind stress, focusing on the role of cortisol and the HPA axis. It explains the concept of allostasis and how chronic stress can disrupt this balance. The text also offers practical suggestions for managing stress through mindfulness and self-care practices, emphasizing that even small changes can make a significant difference.

Read more »

Learning from Biology: The Evolution of AI VisionThis article explores how understanding biological vision can shed light on the development of artificial intelligence, particularly in the field of computer vision. It examines the similarities and differences between how humans and machines process visual information, drawing parallels between biological and artificial neural networks.

Learning from Biology: The Evolution of AI VisionThis article explores how understanding biological vision can shed light on the development of artificial intelligence, particularly in the field of computer vision. It examines the similarities and differences between how humans and machines process visual information, drawing parallels between biological and artificial neural networks.

Read more »