Agents, chatbots and other tools based on artificial intelligence (AI) are increasingly used in everyday life. Agents based on large language models (LLMs), such as ChatGPT and Llama, have become impressively fluent in their responses, but quite often provide convincing yet incorrect information.

Researchers at the University of Tokyo draw parallels between this issue and a human language disorder known as aphasia, where sufferers may speak fluently but make meaningless or hard-to-understand statements. This similarity could point toward better forms of diagnosis for aphasia, and even provide insight to AI engineers seeking to improve LLM-based agents.

This article was written by a human being, but the use of text-generating AI is on the rise in many areas. As more and more people come to use and rely on such tools, there is an ever-increasing need to make sure that they deliver correct and coherent responses and information to their users. Many familiar tools, including ChatGPT and others, appear very fluent in whatever they deliver. But their responses cannot always be relied upon because of the amount of essentially made-up content they produce. Users who are not sufficiently knowledgeable about the subject area in question can easily fall foul of assuming this information is right, especially given the high degree of confidence ChatGPT and others show.

"You can't fail to notice how some AI systems can appear articulate while still producing often significant errors," said Professor Takamitsu Watanabe from the International Research Center for Neurointelligence at the University of Tokyo. "But what struck my team and I was a similarity between this behavior and that of people with Wernicke's aphasia, where such people speak fluently but don't always make much sense. That prompted us to wonder if the internal mechanisms of these AI systems could be similar to those of the human brain affected by aphasia, and if so, what the implications might be."

To explore this idea, the team used a method called energy landscape analysis, a technique originally developed by physicists seeking to visualize energy states in magnetic metal, but which was recently adapted for neuroscience. They examined patterns in resting brain activity from people with different types of aphasia and compared them to internal data from several publicly available LLMs. In their analysis, the team did discover some striking similarities. The way digital information or signals are moved around and manipulated within these AI models closely matched the way some brain signals behaved in the brains of people with certain types of aphasia, including Wernicke's aphasia.

"You can imagine the energy landscape as a surface with a ball on it. When there's a curve, the ball may roll down and come to rest, but when the curves are shallow, the ball may roll around chaotically," said Watanabe. "In aphasia, the ball represents the person's brain state. In LLMs, it represents the continuing signal pattern in the model based on its instructions and internal dataset."

The research has several implications. For neuroscience, it offers a possible new way to classify and monitor conditions like aphasia based on internal brain activity rather than just external symptoms. For AI, it could lead to better diagnostic tools that help engineers improve the architecture of AI systems from the inside out. Despite the similarities they discovered, however, the researchers urge caution against making too many assumptions.

"We're not saying chatbots have brain damage," said Watanabe. "But they may be locked into a kind of rigid internal pattern that limits how flexibly they can draw on stored knowledge, just like in receptive aphasia. Whether future models can overcome this limitation remains to be seen, but understanding these internal parallels may be the first step toward smarter, more trustworthy AI too."
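To make the ball-on-a-surface picture of energy landscape analysis more concrete, here is a minimal Python sketch. It is not the researchers' pipeline: the article does not describe their model, so the sketch assumes a common formulation in which each unit (a brain region or a model component) is either active (+1) or inactive (-1), and an Ising-type energy is assigned to every activity pattern. The parameters `h` and `J` and the three-unit system are hypothetical toy values chosen only for illustration.

```python
from itertools import product

def energy(state, h, J):
    """Ising-type energy: E = -sum_i h_i*s_i - sum_{i<j} J_ij*s_i*s_j."""
    n = len(state)
    field = sum(h[i] * state[i] for i in range(n))
    coupling = sum(J[i][j] * state[i] * state[j]
                   for i in range(n) for j in range(i + 1, n))
    return -field - coupling

def neighbors(state):
    """All patterns that differ from `state` in exactly one unit."""
    for i in range(len(state)):
        flipped = list(state)
        flipped[i] = -flipped[i]
        yield tuple(flipped)

def local_minima(h, J):
    """Patterns whose energy is strictly lower than every neighbor's.
    These are the 'valleys' where the ball can come to rest."""
    return [s for s in product((-1, 1), repeat=len(h))
            if all(energy(s, h, J) < energy(nb, h, J) for nb in neighbors(s))]

def roll_downhill(state, h, J):
    """Greedy descent: step to the lowest-energy neighbor until none is lower."""
    state = tuple(state)
    while True:
        best = min(neighbors(state), key=lambda s: energy(s, h, J))
        if energy(best, h, J) >= energy(state, h, J):
            return state
        state = best

# Hypothetical toy parameters: three units that all prefer to agree.
h = [0.0, 0.0, 0.0]
J = [[0, 1, 1],
     [1, 0, 1],
     [1, 1, 0]]

minima = local_minima(h, J)                  # the two fully aligned patterns
resting = roll_downhill((1, 1, -1), h, J)    # rolls into the all-active valley
print(minima, resting)
```

In this toy landscape the two valleys are deep, so a pattern started nearby settles quickly. Shrinking the couplings in `J` makes the valleys shallower, which corresponds to the situation in the quote where the ball is less firmly held in place.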

Intelligence Brain Injury Perception Neural Interfaces Statistics Computer Modeling Mathematical Modeling

United States Latest News, United States Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

AI agents like ChatGPT will soon shop for you thanks to new techAI agents like ChatGPT might soon shop online for goods for the user, and Visa, Mastercard, and PayPal have credit card tech ready for them.

AI agents like ChatGPT will soon shop for you thanks to new techAI agents like ChatGPT might soon shop online for goods for the user, and Visa, Mastercard, and PayPal have credit card tech ready for them.

Read more »

Sam Altman, the architect of ChatGPT, is rolling out a device that verifies you're humanAltman's World venture wants to convince people to scan their eyeballs to prove they're human amidst a proliferation of AIs and bots.

Sam Altman, the architect of ChatGPT, is rolling out a device that verifies you're humanAltman's World venture wants to convince people to scan their eyeballs to prove they're human amidst a proliferation of AIs and bots.

Read more »

Sam Altman, the architect of ChatGPT, is rolling out an orb that verifies you're humanAltman's World venture wants to convince people to scan their eyeballs to prove they're human amidst a proliferation of AIs and bots.

Sam Altman, the architect of ChatGPT, is rolling out an orb that verifies you're humanAltman's World venture wants to convince people to scan their eyeballs to prove they're human amidst a proliferation of AIs and bots.

Read more »

The AI Model Showdown — Which LLM Deserves Your Trust?Discover how ChatGPT, Gemini, Claude and Perplexity compare in performance, security and enterprise readiness. Choosing the right AI model is now an existential decision.

The AI Model Showdown — Which LLM Deserves Your Trust?Discover how ChatGPT, Gemini, Claude and Perplexity compare in performance, security and enterprise readiness. Choosing the right AI model is now an existential decision.

Read more »

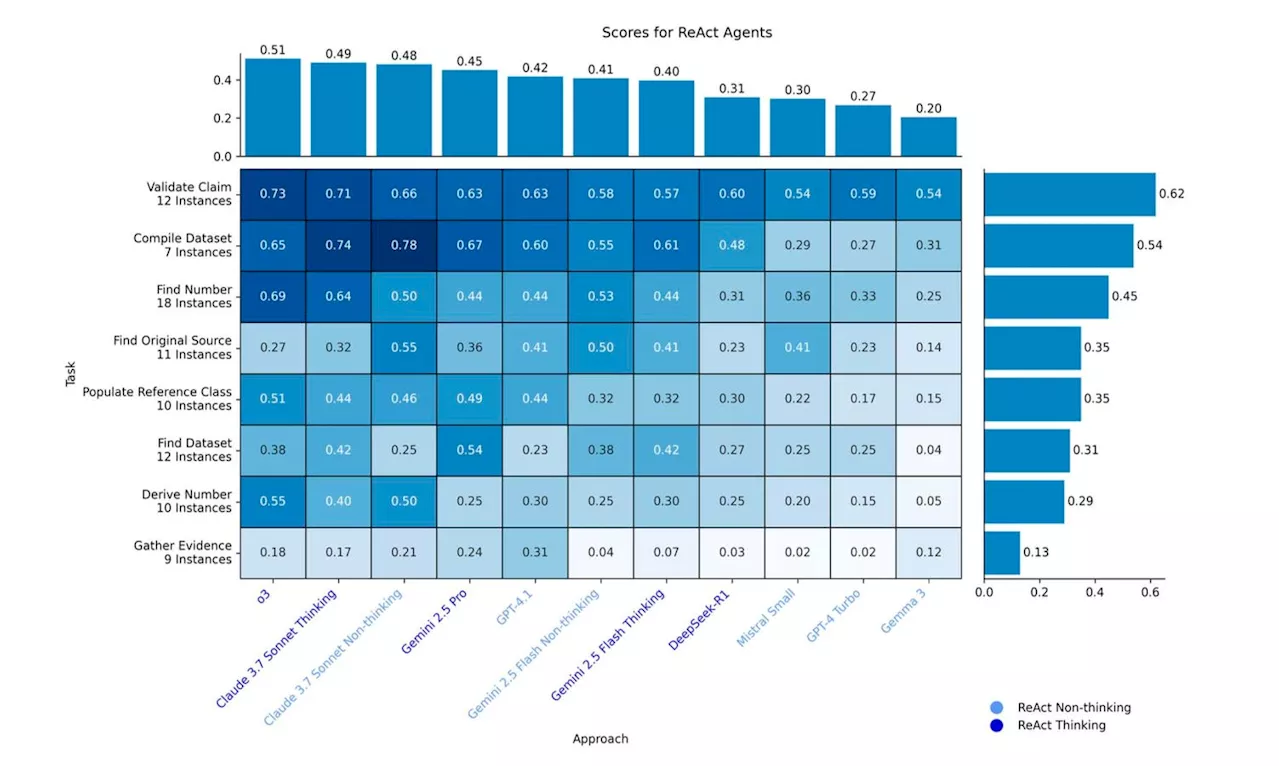

ChatGPT Beats Claude, Google’s Gemini, DeepSeek In Test Of AI AgentsAI agents are getting better. But while ChatGPT's o3 model is the best, there remain issues, and the best humans still outperform generative AI research solutions.

ChatGPT Beats Claude, Google’s Gemini, DeepSeek In Test Of AI AgentsAI agents are getting better. But while ChatGPT's o3 model is the best, there remain issues, and the best humans still outperform generative AI research solutions.

Read more »

ChatGPT is my therapist — it's more qualified than any human could beToday's Video Headlines: 05/13/25

ChatGPT is my therapist — it's more qualified than any human could beToday's Video Headlines: 05/13/25

Read more »