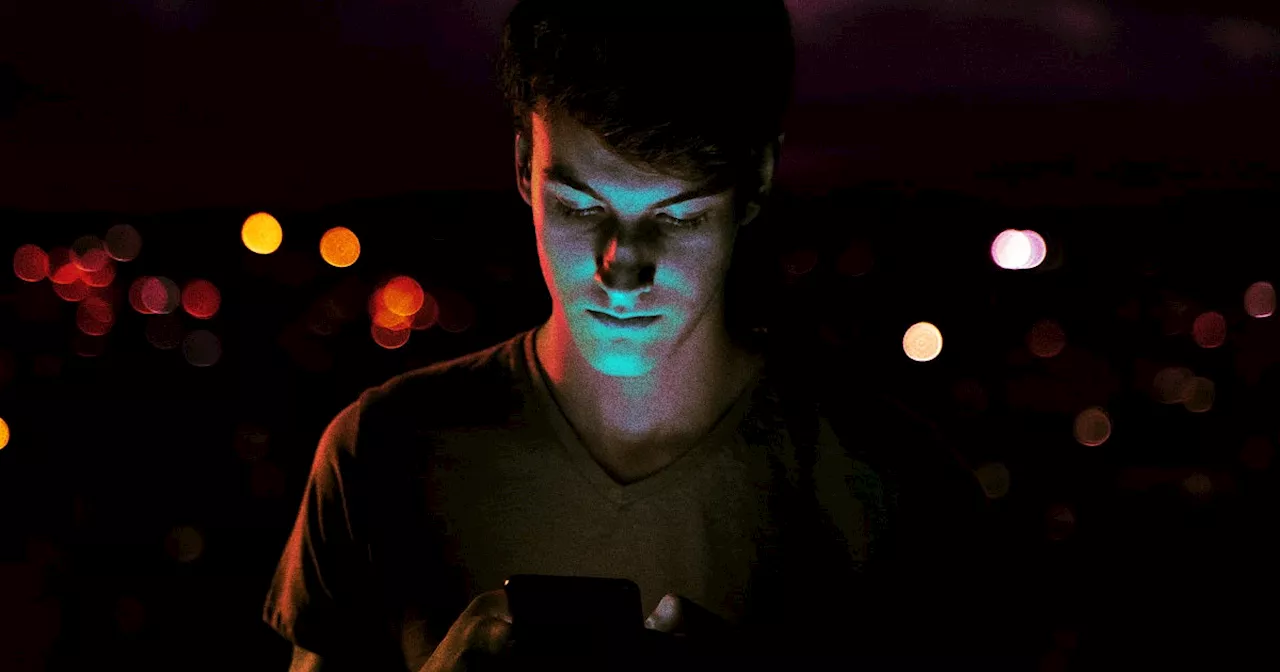

People are increasingly turning to AI chatbots like ChatGPT to vent about their personal problems, seeking comfort and validation in a judgment-free space. This trend, documented on platforms like TikTok, highlights the evolving role of technology in emotional support. While some view it as a harmless way to process feelings, experts raise concerns about ethical implications, data privacy, and the potential for harm in crisis situations.

People generally use ChatGPT as a personal assistant: to draft emails, create itineraries, or help with research. But for Patricia Fragoso, a 32-year-old medical admitting clerk from Los Angeles, California, it’s something else entirely: a confidant for venting about her love life.

“When my relationship started getting really rocky, I was like, ‘I need to talk to someone,’” she tells Well+Good. ChatGPT was available. Fragoso still talks to her friends about similar issues but often prefers to complain to the artificial intelligence chatbot about her relationship. “I felt like there is absolutely zero judgment,” she says, “and I can vent about the same thing as much as I want to without having people say, ‘Alright, just get over it.’”

Fragoso is far from the only person using AI technology in this way. Andres Marquez, a 31-year-old behavior analyst from Sun Valley, California, has also trusted ChatGPT with his personal problems. However, he takes a slightly different approach: He doesn’t talk about himself in the first person. “I would say, ‘There is a , and this is their problem. What should they do?’” Marquez vents, then prompts ChatGPT to provide a solution.

How common is this trend? Over a hundred TikTok videos show creators sharing their grievances with AI. For example, one posted a video of their mom confessing her issues to ChatGPT. The chatbot then responded, “I am forever by your side, so just tell me how you feel whenever you want.” Other, a psychoanalyst and a researcher at the Institute of History and Ethics in Medicine at the Technical University of Munich. The experience could be validating, or it could just be a good way to get something off your mind. ChatGPT can be that “friend” who’s always there to help lift your spirits. As for how many users specifically use the technology for venting purposes, OpenAI representatives told Well+Good that the company doesn’t have any stats to share.

Holohan posits that artificial intelligence is engaging and, therefore, enticing.
“We are curious and playful creatures, and, at the moment, there is enough of a back and forth that this technology provides us.” For instance, ChatGPT might ask the same questions as a therapist, such as “How does that make you feel?” As for not being human, that might not be as crucial as we think. “To be quite honest, it makes sense because we have imaginary friends when we’re children, and sometimes, even when we’re adults,” says Holohan, adding that some people talk to their pets. It’s important to note that using AI to vent is currently an understudied concept, so there isn’t sufficient empirical data to support any concrete answers about why it makes us feel better.

An important distinction between venting and therapy: When you vent, you express your negative emotions, such as frustration, disappointment, and anger. In therapy, however, you go through a structured process to better understand the underlying causes of negative emotions., concluded that ChatGPT can “provide an interesting complement to psychotherapy and an easily accessible, good place to go for people with mental health problems.”, a neuropsychologist and the founder of Comprehend the Mind, says that in therapy, you’ll develop coping mechanisms and solutions. Venting, however, generally helps you feel better in the moment., is particularly concerned about violations of the Health Insurance Portability and Accountability Act. She thinks it’s worth asking whether chatbots legally and ethically store personal information and whether that information is sold to third parties.

There’s also concern about crisis situations. “What protocol is used if someone is in a crisis situation, where they might be suicidal, homicidal, or having a psychotic break?” asks Castrillon. Because ChatGPT is not human, licensed, trained, or regulated, there aren’t any concrete answers for that, either.

Marquez considers ChatGPT a friend; perhaps that’s all it should remain.
As for what ChatGPT thinks about that, the AI replied to Marquez, “It’s fascinating to see how technology can replicate some of the comfort traditionally provided by human connections. I’m here to help in any way I can, whether listening, validating, or brainstorming solutions.” It even included a pink heart emoji.

